Parallel computing represents a fundamental shift in how computational tasks are approached, moving away from the traditional model of single sequential processing toward systems where multiple calculations or processes execute simultaneously. The need for parallel computing emerged primarily from physical limits in single-core processor design, usually described as the end of Dennard scaling: transistor counts kept rising roughly as Moore’s Law predicted, but smaller transistors could no longer be clocked faster without raising power density. As transistor density continued to increase, the associated power consumption and heat generation made further clock-rate increases impractical. Starting notably in the mid-2000s, the industry therefore pivoted toward increasing the number of processing units (cores) on a single chip, making parallel processing the default paradigm for achieving performance gains.

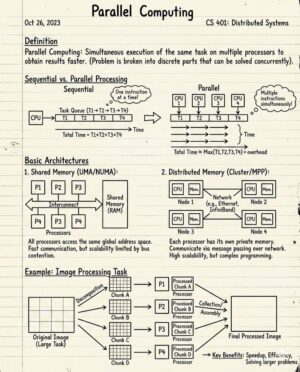

At its core, parallel computing aims to solve problems faster by dividing a large problem into smaller, largely independent sub-problems that can be solved concurrently. The efficacy of this approach is usually measured by speedup: the ratio of a program’s execution time on one processor to its execution time on p processors. The theoretical ideal is linear speedup, where p processors finish the job p times faster, but this is rarely achieved in practice because of overheads from inter-process communication, synchronization, and load imbalance.
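As a minimal sketch, speedup and the closely related efficiency (speedup divided by the processor count, so 1.0 means ideal linear speedup) can be computed directly from wall-clock timings. The function names and the timings in the usage lines are illustrative assumptions, not measurements.

```python
def speedup(t_serial: float, t_parallel: float) -> float:
    """S(p) = T(1) / T(p): how many times faster the parallel run is."""
    return t_serial / t_parallel


def efficiency(t_serial: float, t_parallel: float, p: int) -> float:
    """E(p) = S(p) / p: 1.0 means ideal linear speedup on p processors."""
    return speedup(t_serial, t_parallel) / p


# Hypothetical timings: 120 s on one processor, 20 s on eight processors.
print(speedup(120.0, 20.0))        # 6.0
print(efficiency(120.0, 20.0, 8))  # 0.75
```

A speedup of 6 on 8 processors (75 percent efficiency) is the kind of sub-linear result that communication, synchronization, and load-imbalance overheads typically produce.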

One of the foundational concepts governing the limits of parallel speedup is Amdahl’s Law. It states that the maximum speedup achievable by parallelizing a program is limited by the fraction of its execution time that must run sequentially: with a parallel fraction P and N processors, the speedup is 1 / ((1 - P) + P/N), which can never exceed 1 / (1 - P). Even if 90 percent of a program can be perfectly parallelized, the remaining 10 percent caps the overall speedup at 10x, regardless of how many processors are added. This highlights the crucial role of algorithmic design in making as much of the workload as possible amenable to concurrent execution, thereby maximizing the parallel fraction (P) and minimizing the sequential fraction (1 - P).
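Amdahl’s Law, S(N) = 1 / ((1 - P) + P/N), is easy to evaluate numerically, which makes the diminishing returns visible. This is a minimal sketch; the function name is illustrative, and P = 0.9 matches the 90 percent example.

```python
def amdahl_speedup(p: float, n: int) -> float:
    """Amdahl's Law: S(N) = 1 / ((1 - P) + P / N)."""
    if not 0.0 <= p <= 1.0:
        raise ValueError("parallel fraction must be in [0, 1]")
    if n < 1:
        raise ValueError("need at least one processor")
    return 1.0 / ((1.0 - p) + p / n)


# With 90 percent parallel work, adding processors quickly stops helping:
for n in (10, 100, 1000):
    print(n, round(amdahl_speedup(0.9, n), 2))  # approx 5.26, 9.17, 9.91
```

Going from 100 to 1000 processors buys less than one additional unit of speedup, because the sequential 10 percent dominates once P/N becomes small.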

Parallel computer architectures are typically classified using Flynn’s taxonomy, which categorizes systems based on the multiplicity of instruction streams and data streams. The four primary classifications are: Single Instruction, Single Data (SISD), which refers to conventional sequential processors; Single Instruction, Multiple Data (SIMD), where a single instruction operates simultaneously on multiple data streams, famously used by vector processors and modern Graphics Processing Units (GPUs); Multiple Instruction, Single Data (MISD), which is rarely implemented commercially but theoretically involves multiple instructions operating on a single data stream (sometimes applied in specialized fault-tolerant systems); and Multiple Instruction, Multiple Data (MIMD), which is the most common form of parallel computer today, encompassing multi-core CPUs, clusters, and supercomputers, where independent processors execute different instructions on different pieces of data.

The choice of architecture heavily influences the programming model. In shared memory systems (typically multi-core CPUs), all processors access a single, common pool of memory. Programming models for shared memory environments, such as OpenMP (Open Multi-Processing), have threads communicate implicitly through shared variables. Synchronization primitives such as locks, semaphores, and barriers are needed to coordinate access to shared data and prevent race conditions. A race condition occurs when two or more threads access the same memory location concurrently, at least one of them writes to it, and nothing orders those accesses, so the result depends on unpredictable thread timing and can be incorrect.
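A classic illustration is several threads incrementing one shared counter. The increment is a read-modify-write, so without synchronization two threads can read the same old value and one update is lost. The sketch below uses Python’s `threading.Lock` as a stand-in for an OpenMP critical section or lock; it is an analogy under that assumption, not OpenMP code, and the function name is hypothetical.

```python
import threading


def locked_increment(num_threads: int, increments_per_thread: int) -> int:
    """Threads share one counter; a lock makes each read-modify-write exclusive."""
    counter = 0
    lock = threading.Lock()

    def worker() -> None:
        nonlocal counter
        for _ in range(increments_per_thread):
            with lock:  # critical section: only one thread updates at a time
                counter += 1

    threads = [threading.Thread(target=worker) for _ in range(num_threads)]
    for t in threads:
        t.start()
    # Joining every thread plays the role of the implicit barrier
    # at the end of an OpenMP parallel region.
    for t in threads:
        t.join()
    return counter


print(locked_increment(4, 10_000))  # 40000
```

Removing the `with lock:` line makes the final count depend on thread scheduling, which is exactly the non-determinism described above.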

Conversely, distributed memory systems, like computer clusters or supercomputing grids, utilize separate, physically distributed memory spaces for each processing element. In this model, processors must communicate explicitly by sending and receiving messages over a network interconnect. The standard programming interface for this architecture is the Message Passing Interface (MPI). MPI provides a robust set of routines for point-to-point communication and collective operations (like broadcast and reduction), enabling processors to exchange the necessary data to solve the overall problem. Programming distributed memory systems often requires greater effort in decomposing the data and managing communication latency.
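The message-passing style can be sketched on one machine by giving each simulated rank only its own data and letting ranks interact solely through messages. This is a minimal simulation, assuming threads as ranks and a `queue.Queue` as rank 0’s inbox; real MPI programs would use separate processes and calls such as `MPI_Send`/`MPI_Recv`, or a single collective `MPI_Reduce`. The function name is hypothetical.

```python
import queue
import threading


def message_passing_sum(data: list[int], num_ranks: int) -> int:
    """Simulated ranks share no results; partial sums travel only as messages."""
    root_inbox = queue.Queue()  # messages destined for rank 0
    result: list[int] = []

    def rank_main(rank: int) -> None:
        # Each rank works on its own copy of a strided slice, as if in private memory.
        local = data[rank::num_ranks]
        partial = sum(local)
        if rank == 0:
            total = partial
            for _ in range(num_ranks - 1):
                total += root_inbox.get()  # blocking receive (compare MPI_Recv)
            result.append(total)
        else:
            root_inbox.put(partial)  # send to rank 0 (compare MPI_Send)

    ranks = [threading.Thread(target=rank_main, args=(r,)) for r in range(num_ranks)]
    for t in ranks:
        t.start()
    for t in ranks:
        t.join()
    return result[0]


print(message_passing_sum(list(range(1, 101)), 4))  # 5050
```

Collecting every partial result at one root, as here, is the simplest reduction; MPI implementations of collectives generally use smarter communication patterns, such as trees, as the rank count grows.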

A hybrid approach, where nodes in a cluster are themselves multi-core shared memory machines, utilizes a combination of MPI for inter-node communication and OpenMP (or similar threading models) for intra-node parallelism. This hybrid programming strategy leverages the best performance characteristics of both models: high bandwidth and low latency within a node, and scalability across numerous nodes.
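The two-level decomposition can be sketched with a simple sum as the workload. Here Python’s `ThreadPoolExecutor` stands in for OpenMP threads inside a node, and the outer loop stands in for MPI ranks. In this sketch the “nodes” run one after another in a single process, whereas a real hybrid program would launch one MPI process per node. All helper names are hypothetical.

```python
from concurrent.futures import ThreadPoolExecutor


def chunk(seq: list[int], parts: int) -> list[list[int]]:
    """Split seq into `parts` contiguous chunks whose sizes differ by at most 1."""
    size, extra = divmod(len(seq), parts)
    chunks, start = [], 0
    for i in range(parts):
        end = start + size + (1 if i < extra else 0)
        chunks.append(seq[start:end])
        start = end
    return chunks


def node_sum(node_data: list[int], threads_per_node: int) -> int:
    # Intra-node level (the OpenMP role): threads share node_data, each sums one chunk.
    with ThreadPoolExecutor(max_workers=threads_per_node) as pool:
        return sum(pool.map(sum, chunk(node_data, threads_per_node)))


def hybrid_sum(data: list[int], num_nodes: int, threads_per_node: int) -> int:
    # Inter-node level (the MPI role): each "node" owns a disjoint slice,
    # and the per-node results are combined at the end, as a reduction would.
    partials = [node_sum(part, threads_per_node) for part in chunk(data, num_nodes)]
    return sum(partials)


print(hybrid_sum(list(range(1000)), num_nodes=3, threads_per_node=4))  # 499500
```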

Hardware accelerators, above all Graphics Processing Units (GPUs), have redefined the landscape of parallel computing. Initially designed for rendering graphics, GPUs evolved into highly parallel processors with thousands of small cores optimized for performing the same operation on vast amounts of data. NVIDIA describes its execution model as SIMT (Single Instruction, Multiple Threads), a close relative of the SIMD paradigm in which groups of threads execute the same instruction on different data elements. Technologies like NVIDIA’s CUDA and OpenCL allow programmers to harness this massive parallelism for general-purpose computation (GPGPU), achieving large speedups in fields such as machine learning (especially deep neural networks), scientific simulation (molecular dynamics, fluid dynamics), and cryptographic hashing.
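The SIMT style can be sketched without a GPU by writing a kernel that handles exactly one element and computing each thread’s global index the way CUDA code commonly does (`blockIdx.x * blockDim.x + threadIdx.x`). This is a sequential Python simulation under that assumption, not CUDA itself: `launch` is a hypothetical helper that loops over every block and thread, whereas a GPU would run them concurrently. The example computes SAXPY (`out = a*x + y`).

```python
def saxpy_kernel(block_idx, block_dim, thread_idx, n, a, x, y, out):
    # Every simulated thread runs this same code on a different element.
    i = block_idx * block_dim + thread_idx  # global index, CUDA-style
    if i < n:  # guard: the last block may have more threads than elements left
        out[i] = a * x[i] + y[i]


def launch(grid_dim, block_dim, kernel, *args):
    # Sequential stand-in for a GPU launch: visit every (block, thread) pair.
    for b in range(grid_dim):
        for t in range(block_dim):
            kernel(b, block_dim, t, *args)


def saxpy(a, x, y, block_dim=4):
    n = len(x)
    out = [0.0] * n
    grid_dim = (n + block_dim - 1) // block_dim  # ceil(n / block_dim) blocks
    launch(grid_dim, block_dim, saxpy_kernel, n, a, x, y, out)
    return out


print(saxpy(2.0, [1, 2, 3, 4, 5], [10, 10, 10, 10, 10]))  # [12.0, 14.0, 16.0, 18.0, 20.0]
```

Because no element depends on another, the order in which blocks and threads run does not matter, which is what lets the hardware execute them all in parallel.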

The main challenge in GPU computing lies in effective memory management. GPUs use a deep memory hierarchy, including fast but small on-chip shared memory, which is visible only to the threads within one thread block, and large but much slower global device memory. Minimizing data movement between host (CPU) memory and device (GPU) memory, which must cross an interconnect such as PCIe, is often the primary factor determining overall application performance.
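A common way to exploit shared memory is tiling: a thread block copies a small tile of each input matrix from global memory into shared memory once, then reuses it many times. The sketch below shows only the loop structure of a tiled matrix multiply in plain Python; the `a_tile` and `b_tile` copies stand in for shared-memory buffers, and the default tile size of 2 is an arbitrary assumption for illustration.

```python
def tiled_matmul(a: list[list[int]], b: list[list[int]], tile: int = 2) -> list[list[int]]:
    """C = A x B computed tile by tile (A is n x k, B is k x m)."""
    n, k, m = len(a), len(a[0]), len(b[0])
    c = [[0] * m for _ in range(n)]
    for i0 in range(0, n, tile):
        for j0 in range(0, m, tile):
            for k0 in range(0, k, tile):
                # "Load" one tile of A and one of B; on a GPU a thread block
                # would copy these from global memory into shared memory.
                a_tile = [row[k0:k0 + tile] for row in a[i0:i0 + tile]]
                b_tile = [row[j0:j0 + tile] for row in b[k0:k0 + tile]]
                # Every element of both tiles is reused across the inner loops.
                for ii, a_row in enumerate(a_tile):
                    for jj in range(len(b_tile[0])):
                        c[i0 + ii][j0 + jj] += sum(
                            a_row[kk] * b_tile[kk][jj] for kk in range(len(a_row))
                        )
    return c


print(tiled_matmul([[1, 2], [3, 4]], [[5, 6], [7, 8]]))  # [[19, 22], [43, 50]]
```

The result is identical to an untiled multiply; only the order of memory accesses changes, so each loaded value is used several times before the next load.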

Beyond single-machine parallelism, cluster computing involves connecting many independent computers via a high-speed network to work collaboratively on large problems. Supercomputers, which are essentially massive clusters built with specialized, high-performance interconnection fabrics (like InfiniBand), are the apex of parallel computing capability, measured by metrics such as Petaflops or Exaflops (quadrillions or quintillions of floating-point operations per second). These systems are vital for grand challenge problems like climate modeling, genomic sequencing, and nuclear simulations.
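A machine’s theoretical peak is simple arithmetic: nodes x cores per node x clock rate x floating-point operations each core completes per cycle. The machine in the usage lines is hypothetical, chosen only to show the calculation. Benchmarks such as the High-Performance LINPACK used by the TOP500 list report sustained performance, which is below this peak.

```python
def peak_flops(nodes: int, cores_per_node: int, clock_hz: float, flops_per_cycle: int) -> float:
    """Theoretical peak = nodes x cores x clock rate x FLOPs per core per cycle."""
    return nodes * cores_per_node * clock_hz * flops_per_cycle


# Hypothetical machine: 1,000 nodes, 64 cores each, 2.0 GHz, 16 FLOPs per cycle per core.
peak = peak_flops(1_000, 64, 2.0e9, 16)
print(f"{peak / 1e15:.3f} petaflops")  # 2.048 petaflops
```

By the same arithmetic, reaching one exaflop (10^18) at that per-core rate would take roughly 490 times as many cores.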

The applications of parallel computing span almost every domain of science, engineering, and commerce. In scientific research, parallel simulations enable chemists to model protein folding, astronomers to simulate galaxy formation, and physicists to explore quantum chromodynamics. In big data analytics, parallel frameworks like Hadoop and Spark distribute data processing tasks across clusters, allowing for the rapid ingestion and analysis of terabytes or petabytes of information. Machine learning models, particularly the training of large transformer models, rely almost entirely on the massive parallelism offered by modern GPU clusters.
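The map-then-reduce pattern behind these frameworks can be sketched on a single machine with a word count. This is neither Hadoop’s nor Spark’s API: threads stand in for cluster workers, and the round-robin partitioning is an assumption. In PySpark, the same computation is commonly written with RDD operations such as `flatMap` and `reduceByKey`.

```python
from collections import Counter
from concurrent.futures import ThreadPoolExecutor


def map_phase(lines: list[str]) -> Counter:
    # Map: each worker turns its own partition of lines into local word counts.
    counts = Counter()
    for line in lines:
        counts.update(line.lower().split())
    return counts


def word_count(lines: list[str], num_partitions: int = 4) -> Counter:
    partitions = [lines[i::num_partitions] for i in range(num_partitions)]
    with ThreadPoolExecutor(max_workers=num_partitions) as pool:
        partial_counts = list(pool.map(map_phase, partitions))
    # Reduce: merge the per-partition counts key by key.
    total = Counter()
    for partial in partial_counts:
        total.update(partial)
    return total


counts = word_count(["the quick fox", "the lazy dog", "The end"], num_partitions=2)
print(counts["the"])  # 3
```

The map step needs no coordination between workers; only the reduce step combines results, which is why the pattern scales across large clusters.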

Despite its power, implementing effective parallel solutions introduces significant challenges. Load balancing is critical; if one processor finishes its task much earlier than others, it sits idle, wasting computational resources and reducing overall efficiency. Debugging parallel code is inherently difficult because the relative timing of threads or processes is often non-deterministic, so errors like deadlocks (where processes wait indefinitely for resources held by each other) or livelocks (where processes continually change state but make no progress) can be extremely hard to reproduce and fix.
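The cost of imbalance shows up in the makespan, the time at which the last worker finishes. The sketch compares a static block split, where tasks are divided into contiguous chunks up front, with a dynamic policy, where each task goes to whichever worker frees up first. These resemble OpenMP’s `schedule(static)` and `schedule(dynamic)` in spirit only; the task costs are hypothetical.

```python
import heapq


def static_makespan(costs: list[float], workers: int) -> float:
    # Static (block) schedule: contiguous chunks assigned before execution starts.
    chunk = -(-len(costs) // workers)  # ceiling division
    loads = [sum(costs[i:i + chunk]) for i in range(0, len(costs), chunk)]
    return max(loads)


def dynamic_makespan(costs: list[float], workers: int) -> float:
    # Dynamic schedule: the next task goes to the worker that becomes free first.
    free_at = [(0.0, w) for w in range(workers)]  # (time worker is free, worker id)
    heapq.heapify(free_at)
    for cost in costs:
        t, w = heapq.heappop(free_at)
        heapq.heappush(free_at, (t + cost, w))
    return max(t for t, _ in free_at)


costs = [8, 8, 1, 1, 1, 1, 1, 1]  # two expensive tasks, then six cheap ones
print(static_makespan(costs, 2))   # 18
print(dynamic_makespan(costs, 2))  # 11.0
```

With the static split, one worker receives both expensive tasks and the other idles for most of the run; the dynamic policy spreads them out. The trade-off is that dynamic scheduling adds a small coordination cost per task.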

Furthermore, communication overhead often becomes the primary bottleneck as the number of processors increases. Data transfer between processors consumes time and network resources. Optimizing algorithms to minimize the frequency and volume of inter-processor communication is paramount for achieving scalability, ensuring that the system continues to yield performance gains as more processing units are added, rather than succumbing to communication saturation.
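A simple, hypothetical cost model makes the trade-off concrete. It assumes the computation divides perfectly across p processors and that results are combined by a tree reduction taking ceil(log2 p) message steps, each costing a latency `alpha` plus message size divided by bandwidth `beta` (the common latency-bandwidth, or alpha-beta, model). All parameter values below are made up for illustration.

```python
def modeled_time(p: int, work: float, alpha: float, beta: float, msg_bytes: float) -> float:
    """Hypothetical model: perfectly divided compute plus a tree reduction
    of ceil(log2 p) steps, each costing alpha + msg_bytes / beta seconds."""
    compute = work / p
    steps = (p - 1).bit_length()  # equals ceil(log2 p) for p >= 1
    return compute + steps * (alpha + msg_bytes / beta)


# Assumed: 1 s of total work, 10 ms latency per message, 1 GB/s bandwidth, 8-byte messages.
procs = [2 ** k for k in range(9)]  # 1, 2, 4, ..., 256
times = {p: modeled_time(p, work=1.0, alpha=0.01, beta=1e9, msg_bytes=8) for p in procs}
best = min(times, key=times.get)
print(best)  # 64
```

Past 64 processors, the per-processor compute saved is smaller than the extra communication step added, so the modeled run time rises again even though more hardware is in use.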

The future of parallel computing is moving toward heterogeneous architectures. This involves integrating specialized accelerators (like FPGAs, ASICs, and various AI chips) alongside traditional CPUs and GPUs within a single system to maximize performance for specific workloads. Programming these diverse systems requires increasingly sophisticated software frameworks and runtime environments that can efficiently manage task scheduling and resource allocation across dissimilar hardware components.

Another emerging area is quantum computing, which is not parallel processing in the traditional sense. Rather than running many independent calculations side by side, a quantum computer manipulates qubits in superposition and uses interference to amplify correct answers, an effect sometimes loosely called quantum parallelism. For certain problems, such as factoring large numbers or simulating complex molecules, this could make tractable instances that are out of reach for even the world’s largest classical parallel supercomputers. However, quantum computing remains highly specialized and cannot yet replace classical parallel systems for general tasks.

In summary, parallel computing is not just an optimization technique; it is the required foundation for all high-performance computation in the 21st century. Whether through multi-core shared memory programming, message passing across distributed systems, or utilizing massive GPU accelerators, mastering the principles of concurrency, managing synchronization, and overcoming communication bottlenecks are essential skills for leveraging the computational power required to address humanity’s most complex challenges, from drug discovery to climate science and artificial intelligence.

The continued drive for performance necessitates constant innovation in interconnect technology, memory technology (such as High Bandwidth Memory, or HBM), and low-power architectures. Because moving data now often costs more time and energy than computing on it, future parallel systems are also exploring “in-memory computing” or “processing-in-memory” (PIM), where processing elements are placed physically closer to, or directly within, the memory modules themselves. This architectural evolution aims to cut the energy and time consumed by fetching data across traditional CPU-memory buses, offering another path toward greater efficiency and scalability in exascale and future systems. Writing effective parallel programs therefore requires a deep understanding of these hardware constraints and the ability to design algorithms that scale and minimize communication dependencies. Only then does the collective effort of thousands of processing units yield efficient results rather than being consumed by synchronization delays or bandwidth limitations.

Furthermore, the development of parallel software tools and compilers is rapidly advancing to ease the burden on programmers. Automated parallelization tools, sophisticated profilers that identify bottlenecks, and domain-specific languages (DSLs) designed for parallel execution environments are becoming standard. This trend aims to democratize high-performance computing, making the benefits of parallel execution accessible to a broader range of developers who may not be experts in low-level memory management or message passing protocols. The focus is shifting towards productivity without sacrificing performance, recognizing that the growth of parallel computing hinges not just on hardware capacity, but on the ease with which that capacity can be utilized for real-world problems.