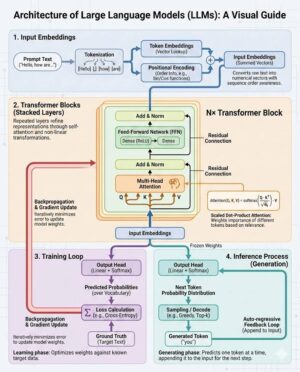

The architecture of Large Language Models (LLMs) represents a confluence of computational linguistics, deep learning, and massive-scale engineering, built on the Transformer architecture introduced in 2017. Before the Transformer, recurrent neural networks (RNNs) and their variants like LSTMs struggled with very long sequences: they suffered from vanishing gradients, and because each step depends on the previous hidden state, their computation could not be parallelized across the positions of a sequence. The Transformer addressed these shortcomings by replacing recurrence and convolutions entirely with attention, a mechanism that lets the model weigh the importance of every word in the input sequence relative to the word currently being processed, regardless of distance. This core innovation is what enabled the scaling of models into the “Large” category.

At its heart, an LLM is structured as a stack of identical layers known as Transformer blocks. These blocks consist primarily of two major sub-components: a multi-head self-attention mechanism and a position-wise feed-forward network. In the original design, each sub-component is wrapped in a residual connection and followed by layer normalization. This architectural pattern promotes stable training even when stacking dozens of layers, or more than a hundred in the largest models, which is crucial for models containing billions of parameters.

The most critical element is the Self-Attention mechanism. It calculates representations of input tokens by relating them to all other tokens in the same sequence. For any given token, self-attention allows the model to look at other positions in the input sequence for clues that can help encode that token better. Mathematically, each token’s input representation is passed through three learned linear projections to produce three vectors: the Query (Q), the Key (K), and the Value (V). The similarity between the Query of the current token and the Keys of all tokens (including itself) is computed with a dot product, divided by the square root of the key dimension to keep the scores in a stable range, and passed through a softmax function to obtain attention weights that sum to one. These weights are then applied to the Values, resulting in a weighted sum that constitutes the output of the attention head for that token. This process allows the model to dynamically select and prioritize relevant information from the context.
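The computation described above can be sketched in a few lines of plain Python. This is a minimal illustration rather than a production implementation: the function names (`self_attention`, `matvec`, `softmax`) and the toy identity projection matrices are assumptions chosen for readability, and real models use batched tensor operations with learned weights.

```python
import math

def softmax(xs):
    """Numerically stable softmax over a list of floats."""
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, x):
    """Multiply matrix W (a list of rows) by vector x."""
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def self_attention(X, Wq, Wk, Wv):
    """Single-head scaled dot-product self-attention.

    X is a list of token vectors; Wq, Wk, Wv are projection matrices.
    Returns (outputs, attention_weights), one row per token.
    """
    Q = [matvec(Wq, x) for x in X]
    K = [matvec(Wk, x) for x in X]
    V = [matvec(Wv, x) for x in X]
    scale = math.sqrt(len(K[0]))  # square root of the key dimension
    outputs, weights = [], []
    for q in Q:
        # Similarity of this query to every key, then normalize to weights.
        w = softmax([dot(q, k) / scale for k in K])
        # Weighted sum of the value vectors.
        outputs.append([sum(wj * v[c] for wj, v in zip(w, V))
                        for c in range(len(V[0]))])
        weights.append(w)
    return outputs, weights

# Toy input: three tokens with 2-dimensional embeddings (hypothetical values).
X = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
IDENTITY = [[1.0, 0.0], [0.0, 1.0]]  # stand-in for learned projections
outputs, weights = self_attention(X, IDENTITY, IDENTITY, IDENTITY)
for row in weights:
    print([round(w, 3) for w in row])
```

Each printed row holds the attention weights for one token and sums to one. With these toy weights the first token attends equally to itself and to the third token, whose keys both overlap with its query, and less to the second.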

Multi-Head Attention extends this concept. Instead of performing a single attention function, the input is linearly projected multiple times (e.g., eight times) into different subspaces. Each of these “heads” then performs the self-attention calculation independently and in parallel. The resulting outputs from all heads are then concatenated and linearly transformed back into the expected dimension. The primary benefit of multi-head attention is that it allows the model to focus on different types of relationships simultaneously—one head might focus on syntactic dependencies, while another focuses on semantic meaning or coreference resolution—thereby enriching the resulting representation.
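As a rough sketch of the split, attend, and concatenate pattern, the following plain-Python example slices already-projected Q, K, and V rows into heads, runs attention per head, and applies an output projection. The helper names (`split_heads`, `attend`, `multi_head_attention`) and the toy values are hypothetical, and slicing one large projection into per-head chunks is just one common way to realize the separate per-head projections.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def attend(Q, K, V):
    """Scaled dot-product attention for one head; one output row per query."""
    scale = math.sqrt(len(Q[0]))
    out = []
    for q in Q:
        w = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in K])
        out.append([sum(wj * v[c] for wj, v in zip(w, V))
                    for c in range(len(V[0]))])
    return out

def split_heads(M, num_heads):
    """Slice each row of M into num_heads equal chunks; one matrix per head."""
    d_head = len(M[0]) // num_heads
    return [[row[h * d_head:(h + 1) * d_head] for row in M]
            for h in range(num_heads)]

def multi_head_attention(Q, K, V, Wo, num_heads):
    """Run attention independently per head, concatenate, then project with Wo."""
    heads = [attend(q, k, v) for q, k, v in zip(split_heads(Q, num_heads),
                                                split_heads(K, num_heads),
                                                split_heads(V, num_heads))]
    # Concatenate the per-head outputs for each token position.
    concat = [sum((head[t] for head in heads), []) for t in range(len(Q))]
    # Final linear transformation back to the model dimension.
    return [[sum(w * x for w, x in zip(row, vec)) for row in Wo] for vec in concat]

# Toy setup: 3 tokens, model dimension 4, 2 heads (hypothetical values).
X = [[1.0, 0.0, 0.0, 1.0], [0.0, 1.0, 1.0, 0.0], [1.0, 1.0, 0.0, 0.0]]
Wo = [[1.0 if i == j else 0.0 for j in range(4)] for i in range(4)]
out = multi_head_attention(X, X, X, Wo, num_heads=2)
```

Because each head only sees its own slice of the vectors, the two heads here compute different attention patterns over the same three tokens, which is the property the paragraph above describes.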

Since the attention mechanism inherently processes all tokens simultaneously without regard to their order, the Transformer architecture requires a means to incorporate sequence order information. This is achieved through Positional Encoding. Before the input embeddings are fed into the first Transformer block, fixed or learned vectors representing the position of each token are added to the token embeddings. The original Transformer used sinusoidal functions for this purpose, providing a unique signal for every position, while early GPT models used learned position embeddings. Many modern LLMs, such as Llama and Mistral, instead use Rotary Positional Embeddings (RoPE), which integrate positional information into the attention calculation itself by rotating the Q and K vectors so that attention scores depend on the relative offset between tokens. This relative formulation tends to behave better on long contexts and, combined with context-extension techniques, makes it easier to stretch a model beyond its training length.
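The two schemes can be compared in a short sketch. The sinusoidal function follows the formula from the original Transformer paper; the `rope` function rotates adjacent pairs of dimensions, which is one of two layouts found in practice (some implementations pair the first half of the vector with the second half instead). Function names and the example vectors are hypothetical.

```python
import math

def sinusoidal_encoding(pos, d_model):
    """Fixed encoding: sin on even dimensions, cos on odd dimensions."""
    pe = []
    for i in range(0, d_model, 2):
        angle = pos / (10000 ** (i / d_model))
        pe.extend([math.sin(angle), math.cos(angle)])
    return pe[:d_model]

def rope(vec, pos, base=10000.0):
    """Rotate each adjacent pair of dimensions by a position-dependent angle."""
    d = len(vec)
    out = []
    for i in range(0, d, 2):
        theta = pos / (base ** (i / d))
        c, s = math.cos(theta), math.sin(theta)
        x, y = vec[i], vec[i + 1]
        out.extend([x * c - y * s, x * s + y * c])
    return out

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

q = [1.0, 2.0, 3.0, 4.0]
k = [0.5, -1.0, 2.0, 0.0]

# Same relative offset (query two positions after the key), different absolute positions.
score_near = dot(rope(q, 3), rope(k, 1))
score_far = dot(rope(q, 10), rope(k, 8))
print(abs(score_near - score_far) < 1e-9)
```

The final check illustrates the key property of RoPE: the attention score between a rotated query and a rotated key depends only on how far apart the two tokens are, not on where they sit in the sequence.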

Following the attention sub-layer, the output passes through the Feed-Forward Network (FFN). This is a small fully connected network applied independently and identically to each position. In its standard form it has two layers: a linear transformation that expands the representation to a larger hidden dimension (four times the model dimension in the original Transformer), a non-linear activation function (ReLU originally, GELU in many later models), and a second linear transformation that projects back down to the model’s standard dimension. Recent models often use a gated variant such as SwiGLU, which adds a third projection that acts as a gate. The FFN processes the output of the attention mechanism and introduces non-linearity, letting the model transform the attended information in a richer, higher-dimensional feature space.
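A minimal sketch of the position-wise FFN, in both a two-layer GELU form and a gated SwiGLU form, might look like the following. The weight matrices are arbitrary toy values, the function names are hypothetical, and the SwiGLU variant is shown without biases, which is a common but not universal choice.

```python
import math

def gelu(x):
    """Exact GELU: x times the standard normal CDF at x."""
    return 0.5 * x * (1.0 + math.erf(x / math.sqrt(2.0)))

def silu(x):
    """SiLU (Swish) activation, used as the gate in SwiGLU."""
    return x / (1.0 + math.exp(-x))

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def ffn(x, W1, b1, W2, b2):
    """Two-layer FFN: expand, apply GELU, project back down."""
    hidden = [gelu(h + b) for h, b in zip(matvec(W1, x), b1)]
    return [o + b for o, b in zip(matvec(W2, hidden), b2)]

def swiglu_ffn(x, W_gate, W_up, W_down):
    """Gated FFN: SiLU(W_gate x) multiplied elementwise by (W_up x), then projected down."""
    gate = [silu(g) for g in matvec(W_gate, x)]
    up = matvec(W_up, x)
    return matvec(W_down, [g * u for g, u in zip(gate, up)])

# Toy sizes: model dimension 2, hidden dimension 4 (hypothetical weights).
W1 = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0], [1.0, -1.0]]
b1 = [0.0, 0.0, 0.0, 0.0]
W2 = [[1.0, 0.0, 0.5, 0.0], [0.0, 1.0, 0.0, 0.5]]
b2 = [0.0, 0.0]

# The same function is applied to every position independently.
sequence = [[1.0, 2.0], [0.0, 0.0]]
outputs = [ffn(x, W1, b1, W2, b2) for x in sequence]
gated_outputs = [swiglu_ffn(x, W1, W1, W2) for x in sequence]
```

Note that no information flows between positions here: mixing across tokens happens only in the attention sub-layer, while the FFN transforms each token's vector on its own.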

LLM architectures are often categorized based on the specific composition of the Transformer stack: encoder-only, decoder-only, or encoder-decoder. Encoder-only architectures, like BERT, are designed primarily for understanding and representing input text (tasks like sequence classification or named entity recognition). They process the entire input simultaneously. Encoder-decoder architectures, like T5 or BART, were the standard for sequence-to-sequence tasks (such as machine translation or summarization), where the encoder processes the input and the decoder generates the output based on the encoder’s hidden states and the previously generated tokens.

The dominant architecture for modern generative LLMs, exemplified by OpenAI’s GPT series, Meta’s Llama, and Mistral AI’s models, is the Decoder-Only stack. This design suits text generation because it is inherently unidirectional: the attention mechanism is masked (Causal Masking) so that each token can attend only to itself and to earlier tokens in the sequence. The mask preserves the causal order needed to generate coherent text one token at a time, and it lets every position in a training sequence serve as a next-token prediction target in a single pass. This simplicity and focus on unidirectional generation are why the decoder-only setup has become the backbone for conversational AI and creative writing tasks.
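Causal masking is usually implemented by setting the scores for future positions to negative infinity before the softmax, so that they receive zero weight. A minimal sketch, assuming a precomputed square matrix of attention scores (the function name and the uniform toy scores are hypothetical):

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def causal_attention_weights(scores):
    """Apply a causal mask to an n x n score matrix, then softmax each row.

    scores[i][j] is the raw score of query position i against key position j.
    Positions j > i lie in the future and are masked out.
    """
    n = len(scores)
    weights = []
    for i in range(n):
        masked = [scores[i][j] if j <= i else float("-inf") for j in range(n)]
        weights.append(softmax(masked))
    return weights

# Uniform toy scores make the effect of the mask easy to see.
scores = [[0.0, 0.0, 0.0] for _ in range(3)]
weights = causal_attention_weights(scores)
for row in weights:
    print([round(w, 3) for w in row])
# prints:
# [1.0, 0.0, 0.0]
# [0.5, 0.5, 0.0]
# [0.333, 0.333, 0.333]
```

The lower-triangular pattern shows that the first token can only attend to itself, while the last token can attend to the whole prefix.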

The sheer scale of LLMs necessitates architectural considerations far beyond just the layer structure. Model size is measured in parameters, the weights and biases learned during training. Current state-of-the-art models often feature tens or hundreds of billions of parameters. Training models of this size requires specialized distributed training paradigms running on large clusters of GPUs or TPUs (Tensor Processing Units). Data parallelism (replicating the model and splitting each batch across devices) and model parallelism (splitting the model itself across devices, either layer by layer as in pipeline parallelism or within each layer as in tensor parallelism) are fundamental to training these models efficiently.
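To give a feel for the numbers involved, the following back-of-the-envelope sketch estimates the parameter count of a dense decoder-only Transformer and the memory needed just to hold its weights. It is a rough approximation under stated assumptions: the configuration is hypothetical, the FFN hidden size is taken to be four times the model dimension, and biases, normalization parameters, and positional embeddings are ignored.

```python
def estimate_params(d_model, n_layers, vocab_size, ffn_multiplier=4):
    """Approximate parameter count of a dense decoder-only Transformer."""
    attention = 4 * d_model * d_model  # Q, K, V, and output projections
    feed_forward = 2 * d_model * (ffn_multiplier * d_model)  # up and down projections
    embeddings = vocab_size * d_model  # token embedding table
    return n_layers * (attention + feed_forward) + embeddings

def weight_memory_gib(n_params, bytes_per_param=2):
    """Memory for the weights alone, in GiB (2 bytes per parameter for 16-bit floats)."""
    return n_params * bytes_per_param / 2**30

# Hypothetical configuration, not taken from any specific model.
params = estimate_params(d_model=4096, n_layers=32, vocab_size=32000)
print(params)                               # prints 6573522944
print(round(weight_memory_gib(params), 1))  # prints 12.2
```

Even this mid-sized hypothetical configuration needs roughly 12 GiB for 16-bit weights alone. Training additionally requires memory for gradients, optimizer state, and activations, which is why the parallelism strategies above become necessary as models grow.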

To manage the computational burden during inference, especially with ever-increasing context lengths, optimization techniques are structurally integrated. For instance, the KV Cache (Key-Value Cache) is a crucial inference optimization. During sequence generation, the Keys and Values calculated by the attention mechanism for previously processed tokens are stored (cached) so that they do not need to be recalculated when processing the next token. This dramatically speeds up decoding by trading memory for compute: each step only computes the Query, Key, and Value for the newest token, but the cache itself grows linearly with sequence length and can dominate memory use at long contexts. Managing that footprint is a large part of making long generation fast and cost-effective.
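The idea can be illustrated with a single attention head. In the sketch below, `decode_step` projects only the newest token and appends its Key and Value to the cache, while `recompute_last` rebuilds every Key and Value from scratch for comparison. The class and function names and the toy weights are hypothetical, and real implementations keep one such cache per layer and per head.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def attend(q, keys, values):
    """Attention output for one query against a list of keys and values."""
    scale = math.sqrt(len(q))
    w = softmax([sum(a * b for a, b in zip(q, k)) / scale for k in keys])
    return [sum(wj * v[c] for wj, v in zip(w, values))
            for c in range(len(values[0]))]

class KVCache:
    """Stores the Keys and Values of all tokens processed so far."""
    def __init__(self):
        self.keys, self.values = [], []

def decode_step(x, Wq, Wk, Wv, cache):
    """Process one new token: project it once, extend the cache, attend over the cache."""
    cache.keys.append(matvec(Wk, x))
    cache.values.append(matvec(Wv, x))
    return attend(matvec(Wq, x), cache.keys, cache.values)

def recompute_last(tokens, Wq, Wk, Wv):
    """Baseline without a cache: re-project every token at every step."""
    keys = [matvec(Wk, t) for t in tokens]
    values = [matvec(Wv, t) for t in tokens]
    return attend(matvec(Wq, tokens[-1]), keys, values)

# Hypothetical toy weights and token vectors.
Wq = [[1.0, 0.0], [0.0, 1.0]]
Wk = [[0.5, 0.5], [1.0, -1.0]]
Wv = [[2.0, 0.0], [0.0, 2.0]]
tokens = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]

cache = KVCache()
cached_outputs = [decode_step(t, Wq, Wk, Wv, cache) for t in tokens]
full_output = recompute_last(tokens, Wq, Wk, Wv)
```

Both paths produce the same output for the latest token, but the cached path performs one Key and one Value projection per step instead of one per token in the prefix. The cost is visible in `cache.keys` and `cache.values`, which gain an entry at every step.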

Further architectural evolution addresses the scaling wall, particularly concerning memory and computational efficiency. The Mixture of Experts (MoE) architecture represents a significant departure from the standard dense Transformer. In an MoE model, the standard FFN component in some or all Transformer blocks is replaced by a sparse layer consisting of multiple “expert” FFNs. For any given input token, a small trainable network called a “router” or “gate” selects only a few (e.g., two) of these experts to process the token, and the rest are skipped. This allows the total parameter count of the model to increase dramatically (sometimes into the trillions) while keeping the computational cost (FLOPs) per token relatively low, as only a fraction of the total parameters are activated for each token. This sparsity enables larger, more capable models without a proportional increase in compute per token, although all experts must still be held in memory at serving time. Mixtral, which routes each token to two of eight experts per layer, is a well-known example.
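A minimal sketch of top-k routing for a single token is shown below. The toy experts simply scale their input, standing in for full FFNs, and the gate weights are obtained by a softmax over the selected experts' router logits, which is one common choice (other designs apply the softmax over all experts before selecting). All names and values are hypothetical.

```python
import math

def softmax(xs):
    m = max(xs)
    exps = [math.exp(x - m) for x in xs]
    total = sum(exps)
    return [e / total for e in exps]

def matvec(W, x):
    return [sum(w * xi for w, xi in zip(row, x)) for row in W]

def make_expert(scale, call_log, name):
    """Toy expert that scales its input and records that it was called."""
    def expert(x):
        call_log.append(name)
        return [scale * xi for xi in x]
    return expert

def moe_layer(x, router_W, experts, top_k=2):
    """Route one token to its top_k experts and mix their outputs."""
    logits = matvec(router_W, x)  # one router score per expert
    top = sorted(range(len(logits)), key=lambda i: logits[i], reverse=True)[:top_k]
    gates = softmax([logits[i] for i in top])  # normalize over the chosen experts only
    out = [0.0] * len(x)
    for gate, idx in zip(gates, top):
        y = experts[idx](x)  # only the selected experts are evaluated
        out = [o + gate * yi for o, yi in zip(out, y)]
    return out, top, gates

call_log = []
experts = [make_expert(s, call_log, i) for i, s in enumerate([1.0, 2.0, 3.0, 4.0])]
router_W = [[0.0, 0.0], [1.0, 0.0], [3.0, 0.0], [2.0, 0.0]]  # hypothetical router weights

out, chosen, gates = moe_layer([1.0, 0.0], router_W, experts)
print(chosen, call_log)  # prints [2, 3] [2, 3]
```

Only two of the four experts run for this token, so the compute cost matches a model with two experts even though four sets of expert parameters exist. Production systems also add load-balancing objectives so that tokens are spread across experts, which this sketch omits.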

Beyond the core attention mechanics, several other design choices shape a model’s behavior at scale: the type of normalization layer (LayerNorm, or the simpler RMSNorm used in models such as Llama), which stabilizes layer inputs; the activation function (like SwiGLU, which often outperforms ReLU in Transformers); and the depth (number of layers) and width (the embedding dimension) of the network. Modern LLMs frequently place the normalization before the self-attention and FFN sub-layers (Pre-Normalization), a design popularized by models like GPT-2, which improves training stability compared to the Post-Normalization used in the original Transformer.
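The difference between the two placements comes down to where the normalization sits relative to the residual connection. The sketch below wires a generic sub-layer both ways; `sublayer` stands in for either attention or the FFN, the learned gain and bias of layer normalization are omitted for brevity, and all names are hypothetical.

```python
import math

def layer_norm(x, eps=1e-5):
    """Normalize a vector to zero mean and unit variance (no learned gain or bias)."""
    mean = sum(x) / len(x)
    var = sum((xi - mean) ** 2 for xi in x) / len(x)
    return [(xi - mean) / math.sqrt(var + eps) for xi in x]

def post_norm_block(x, sublayer):
    """Original Transformer: normalize after adding the residual."""
    return layer_norm([xi + si for xi, si in zip(x, sublayer(x))])

def pre_norm_block(x, sublayer):
    """Pre-Normalization: normalize the sub-layer input, leave the residual path untouched."""
    return [xi + si for xi, si in zip(x, sublayer(layer_norm(x)))]

def zero_sublayer(x):
    """Stand-in sub-layer that contributes nothing, to expose the residual path."""
    return [0.0] * len(x)

x = [1.0, 2.0, 4.0]
pre = pre_norm_block(x, zero_sublayer)
post = post_norm_block(x, zero_sublayer)
print(pre == x)   # prints True
print(post == x)  # prints False
```

With a sub-layer that outputs zeros, the pre-norm block passes its input through unchanged, while the post-norm block still rescales it. That unmodified identity path through every block is commonly cited as the reason pre-norm stacks are easier to train at depth.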

In summary, the architecture of contemporary Large Language Models is a sophisticated adaptation of the foundational Transformer. It relies on multi-head self-attention for contextualizing input, positional encoding for ordering, and high-capacity feed-forward networks for feature extraction, all bundled within robust residual blocks. The trend towards decoder-only models, combined with innovations like Rotary Positional Embeddings and the sparse activation of parameters via the Mixture of Experts paradigm, signifies continuous progress toward building models that are not only vast in scale but also highly efficient and capable of handling increasingly complex and long-form generative tasks.