Data centers are the backbone of the digital world. From streaming videos to online banking, cloud storage to artificial intelligence, nearly every online service relies on these facilities. But behind the seamless experience lies a massive consumption of energy. As demand for digital services grows, so does the energy footprint of data centers. Understanding where this energy goes is essential for improving efficiency and reducing environmental impact.

Servers are usually the largest energy consumers, but they are far from the only ones. Energy use in data centers is distributed across several systems, each playing a critical role in maintaining performance, reliability, and uptime. This article breaks down the major components that consume energy in a typical data center and explains how they contribute to overall power usage.

Breaking Down Data Center Energy Consumption

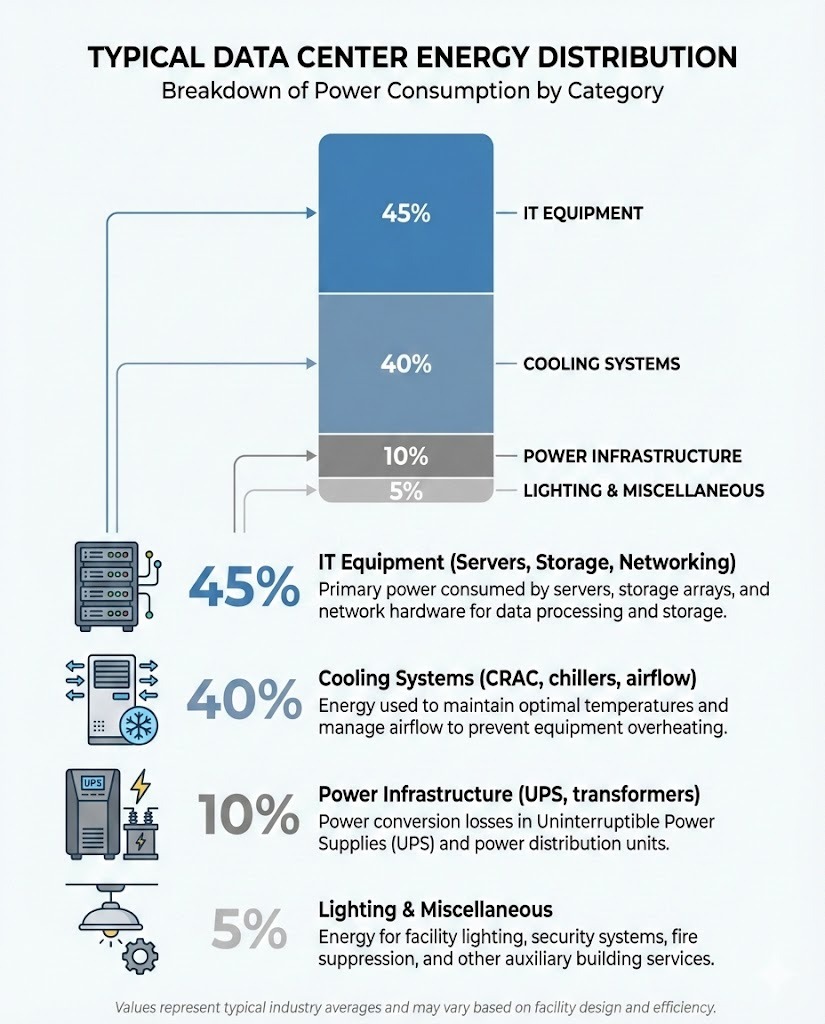

Energy in data centers is used to power and support IT equipment, cooling systems, power distribution, and auxiliary systems. According to industry studies, the largest share of energy typically goes to IT equipment—especially servers—but cooling and power infrastructure also account for significant portions.

A commonly cited breakdown from the U.S. Department of Energy and other research institutions suggests the following approximate distribution:

- IT Equipment (Servers, Storage, Networking): 40–60%

- Cooling Systems: 30–40%

- Power Distribution and UPS: 10–15%

- Lighting and Other Support Systems: 1–5%

These percentages can vary depending on the data center’s design, location, age, and operational practices. For example, older facilities may have less efficient cooling systems, increasing the share of energy used for temperature control.
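To make the breakdown concrete, the sketch below splits a facility's total energy into the four categories listed above. The specific shares (50%, 35%, 12%, 3%) are assumptions picked from within the quoted ranges so that they sum to 100%; they are not measurements from any real facility, and the function name is purely illustrative.

```python
# Illustrative shares picked from within the ranges quoted in the article.
# These are assumptions for the example, not measured values.
SHARES = {
    "it_equipment": 0.50,
    "cooling": 0.35,
    "power_distribution_ups": 0.12,
    "lighting_other": 0.03,
}


def breakdown_kwh(total_kwh, shares=SHARES):
    """Split total facility energy (kWh) across categories by share."""
    if abs(sum(shares.values()) - 1.0) > 1e-9:
        raise ValueError("shares must sum to 1.0")
    return {name: total_kwh * share for name, share in shares.items()}


if __name__ == "__main__":
    # Hypothetical facility using 1,000,000 kWh over some period.
    for name, kwh in breakdown_kwh(1_000_000).items():
        print(f"{name}: {kwh:,.0f} kWh")
```

Swapping in shares measured at a specific site would show how far that facility sits from the typical ranges.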

IT Equipment: The Core Energy Consumer

Servers are the heart of any data center. They process, store, and manage data for applications and users. Modern servers are powerful but energy-intensive, especially when running 24/7 at high capacity. High-performance computing (HPC) environments and AI workloads further increase energy demands due to the need for faster processors and more memory.

Storage systems, including hard drives and solid-state drives (SSDs), also consume energy, though typically less than servers. Networking equipment—such as switches, routers, and firewalls—plays a smaller but still notable role in overall energy use.

One key factor influencing IT energy consumption is utilization. Many servers operate at low utilization rates, often below 20%, yet they still draw significant power when idle. This inefficiency has led to efforts in virtualization and workload consolidation, where multiple applications run on fewer physical machines to reduce total energy use.
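A rough way to see why consolidation helps is to model server power as a fixed idle draw plus a part that grows with utilization. The linear model and the 100 W idle / 200 W peak figures below are simplifying assumptions for illustration only; real servers have their own power curves.

```python
def server_power_w(utilization, idle_w=100.0, peak_w=200.0):
    """Estimate power draw (W) with a simple linear model.

    idle_w and peak_w are hypothetical values, not vendor data.
    """
    if not 0.0 <= utilization <= 1.0:
        raise ValueError("utilization must be between 0 and 1")
    return idle_w + (peak_w - idle_w) * utilization


def fleet_power_w(n_servers, utilization, **kwargs):
    """Total power for n identical servers at the same utilization."""
    return n_servers * server_power_w(utilization, **kwargs)


if __name__ == "__main__":
    # Same total work (10 * 0.15 == 2 * 0.75), spread two different ways.
    before = fleet_power_w(10, 0.15)  # ten lightly loaded servers
    after = fleet_power_w(2, 0.75)    # two well-utilized servers
    print(f"before consolidation: {before:.0f} W")
    print(f"after consolidation:  {after:.0f} W")
```

Under these assumed numbers the ten lightly loaded servers draw roughly 1,150 W and the two consolidated servers roughly 350 W for the same amount of work, because the idle draw is paid once per machine.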

Cooling Systems: A Major but Often Overlooked Cost

Heat is a natural byproduct of electronic equipment. Without proper cooling, servers can overheat, leading to performance degradation or hardware failure. As a result, cooling systems are essential for maintaining safe operating temperatures.

Traditional cooling methods, such as computer room air conditioning (CRAC) units and raised-floor airflow systems, can be energy-intensive. These systems often operate continuously, even when cooling demand is low, leading to wasted energy.

More efficient approaches are now being adopted. These include:

- Hot aisle/cold aisle containment: Organizing server racks to separate hot exhaust air from cool intake air, improving airflow efficiency.

- Free cooling: Using outside air or evaporative cooling when ambient temperatures are low, reducing reliance on mechanical chillers.

- Liquid cooling: Directly cooling servers with liquid, which is more efficient than air for high-density setups.

Data centers in colder climates, such as those in Scandinavia or Canada, can rely on free cooling for much of the year, significantly lowering energy use.
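One simple way to gauge the potential of free cooling at a site is to count how many hours the outside air is cool enough to use. The sketch below assumes a hypothetical 18 °C threshold and a plain list of hourly temperatures; the actual cutoff depends on the facility's design, humidity limits, and equipment specifications.

```python
def free_cooling_fraction(hourly_temps_c, threshold_c=18.0):
    """Fraction of hours at or below an assumed free-cooling threshold.

    threshold_c is a hypothetical setpoint chosen for illustration.
    """
    if not hourly_temps_c:
        raise ValueError("need at least one temperature reading")
    eligible = sum(1 for t in hourly_temps_c if t <= threshold_c)
    return eligible / len(hourly_temps_c)


if __name__ == "__main__":
    # Made-up sample readings in degrees Celsius.
    sample = [4.0, 9.5, 15.0, 21.0, 26.5, 17.0, 12.0, 8.0]
    share = free_cooling_fraction(sample)
    print(f"free cooling possible in {share:.0%} of sampled hours")
```

Run against a full year of local weather data, the same calculation gives a first estimate of how often mechanical chillers could be switched off.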

Power Distribution and Backup Systems

Electricity must be delivered reliably and safely to IT equipment. This process involves multiple stages: incoming power from the grid, transformation to usable voltage levels, and backup systems to prevent outages.

Uninterruptible power supplies (UPS) ensure continuous power during grid failures. However, UPS systems are not 100% efficient—some energy is lost as heat during conversion. Similarly, power distribution units (PDUs) and transformers contribute to energy loss, typically in the range of 5–10%.

Modern data centers are increasingly adopting high-efficiency UPS systems and direct current (DC) power distribution to minimize these losses.
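Because power passes through each stage in series, the losses compound: the overall efficiency is the product of the per-stage efficiencies. The sketch below illustrates this with assumed values (98% for a transformer, 94% for a UPS, 98% for a PDU). These numbers are hypothetical and will differ by equipment and load.

```python
from math import prod

# Hypothetical per-stage efficiencies; real values depend on equipment and load.
STAGE_EFFICIENCY = {
    "transformer": 0.98,
    "ups": 0.94,
    "pdu": 0.98,
}


def chain_efficiency(stages=STAGE_EFFICIENCY):
    """Overall efficiency of stages connected in series."""
    return prod(stages.values())


def grid_power_needed_kw(it_load_kw, stages=STAGE_EFFICIENCY):
    """Grid power required to deliver it_load_kw to the IT equipment."""
    return it_load_kw / chain_efficiency(stages)


if __name__ == "__main__":
    eff = chain_efficiency()
    print(f"overall efficiency: {eff:.1%}")
    print(f"grid power for 100 kW of IT load: {grid_power_needed_kw(100.0):.1f} kW")
```

With these assumed figures, a little over 90% of the incoming power reaches the IT equipment, which shows why even a small gain in UPS efficiency matters at scale.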

Other Energy Uses: Lighting and Support Systems

While lighting and auxiliary systems account for a small fraction of total energy use, they are still part of the overall footprint. LED lighting, motion sensors, and smart building management systems help reduce this consumption.

Additionally, security systems, fire suppression, and monitoring equipment require power, though their share is minimal compared to IT and cooling.

Improving Efficiency: The Role of Design and Technology

As energy costs rise and environmental concerns grow, data center operators are investing in efficiency improvements. Key strategies include:

- Server virtualization: Running multiple virtual machines on a single physical server to increase utilization.

- Energy-efficient hardware: Using processors and components designed for lower power consumption.

- Advanced cooling techniques: Implementing liquid cooling, immersion cooling, or AI-driven thermal management.

- Renewable energy sourcing: Powering data centers with solar, wind, or hydroelectric energy to reduce carbon emissions.

Metrics like Power Usage Effectiveness (PUE) help measure efficiency. PUE is calculated by dividing total facility energy by IT equipment energy. A PUE of 1.0 would mean all energy goes to IT equipment, an ideal that is unattainable in practice because cooling and power delivery always consume some energy. Most modern data centers aim for a PUE below 1.5, with some hyperscale facilities achieving 1.1 or lower.
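The PUE formula is simple enough to compute directly from two meter readings. The sketch below is a minimal illustration; the function names and the sample figures are hypothetical, and both readings are assumed to cover the same time period.

```python
def pue(total_facility_kwh, it_equipment_kwh):
    """Power Usage Effectiveness: total facility energy / IT equipment energy."""
    if it_equipment_kwh <= 0:
        raise ValueError("IT equipment energy must be positive")
    if total_facility_kwh < it_equipment_kwh:
        raise ValueError("total facility energy cannot be less than IT energy")
    return total_facility_kwh / it_equipment_kwh


def overhead_fraction(pue_value):
    """Share of total facility energy that does not reach IT equipment."""
    return 1.0 - 1.0 / pue_value


if __name__ == "__main__":
    # Hypothetical readings: 1,500 kWh total, of which 1,000 kWh went to IT.
    value = pue(1500.0, 1000.0)
    print(f"PUE: {value:.2f}")
    print(f"overhead share of total energy: {overhead_fraction(value):.0%}")
```

In this example the PUE is 1.5, meaning one third of the facility's energy goes to cooling, power delivery, and other overhead instead of IT equipment.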

Key Takeaways

- IT equipment, especially servers, consumes the largest share of energy in data centers, typically 40–60%.

- Cooling systems account for 30–40% of energy use and are a major focus for efficiency improvements.

- Power distribution and backup systems contribute 10–15%, with losses occurring during conversion and transmission.

- Lighting and support systems make up a small portion of total energy consumption, typically 1–5%.

- Efficiency can be improved through better design, advanced cooling, virtualization, and renewable energy.

FAQ

What is the most energy-intensive part of a data center?

IT equipment, especially servers, is typically the largest consumer at 40–60% of total energy. Cooling systems follow at 30–40%, and in older or poorly designed facilities cooling can surpass IT equipment in energy use.

How can data centers reduce their energy consumption?

Strategies include upgrading to energy-efficient hardware, improving cooling methods, increasing server utilization through virtualization, and sourcing renewable energy.

What is PUE, and why is it important?

Power Usage Effectiveness (PUE) measures how efficiently a data center uses energy. It is calculated by dividing total facility energy by IT equipment energy. A lower PUE indicates higher efficiency, with values closer to 1.0 being optimal.