An underwater data center is a specialized computing facility designed to operate beneath the ocean’s surface. These submerged installations house servers, storage systems, networking equipment, and other critical infrastructure required to process and store digital information. Unlike traditional land-based data centers, which are typically located in urban or suburban areas, underwater data centers are deployed in sealed, pressurized containers anchored to the seafloor or suspended in the water column.

The concept may sound futuristic, but it has already moved from theory to reality. In 2014, Microsoft launched Project Natick, a research initiative to explore the feasibility of underwater data centers. A small prototype was deployed and retrieved off the coast of California in 2015, followed by a larger trial near Scotland’s Orkney Islands that was deployed in 2018 and retrieved in 2020. These experiments demonstrated that underwater data centers can operate reliably for extended periods, offering potential advantages in energy efficiency, cooling, and proximity to coastal populations.

At its core, an underwater data center functions similarly to its terrestrial counterpart. It processes data, hosts applications, supports cloud services, and connects to global networks via undersea fiber-optic cables. However, the marine environment introduces unique engineering challenges and opportunities. The surrounding seawater acts as a natural heat sink, enabling highly efficient cooling without the need for energy-intensive air conditioning systems. Additionally, placing data centers near coastal cities reduces latency—the time it takes for data to travel between users and servers—improving performance for end users.

While still in the experimental and early deployment phase, underwater data centers represent a shift in how we think about digital infrastructure. As global data consumption continues to grow—driven by streaming, artificial intelligence, IoT devices, and remote work—the demand for scalable, sustainable, and resilient data processing solutions increases. Underwater facilities may offer a viable path forward, especially in regions where land is scarce, energy costs are high, or environmental regulations are strict.

Why Build Data Centers Underwater?

The decision to place data centers underwater is not arbitrary. It stems from a combination of environmental, economic, and technical considerations that address key limitations of traditional data center models. One of the most compelling reasons is energy efficiency, particularly in cooling. Data centers consume vast amounts of electricity, and a significant portion—up to 40% in some cases—is used for cooling servers to prevent overheating. On land, this requires complex HVAC systems, chillers, and continuous airflow management, all of which contribute to high operational costs and carbon emissions.
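
To make the cooling share concrete, the following sketch works through the arithmetic for a hypothetical facility. The annual energy figure, the assumed 90% cut in cooling energy, and the helper names `split_energy` and `pue` are illustrative assumptions, not measurements from any real deployment. Power usage effectiveness (PUE) is the standard ratio of total facility energy to IT equipment energy.

```python
def split_energy(total_kwh, cooling_fraction, other_overhead_fraction=0.0):
    """Split total facility energy into cooling, other overhead, and IT load."""
    if not 0.0 <= cooling_fraction + other_overhead_fraction < 1.0:
        raise ValueError("overhead fractions must sum to a value in [0, 1)")
    cooling_kwh = total_kwh * cooling_fraction
    other_kwh = total_kwh * other_overhead_fraction
    it_kwh = total_kwh - cooling_kwh - other_kwh
    return {"cooling_kwh": cooling_kwh, "other_kwh": other_kwh, "it_kwh": it_kwh}


def pue(total_kwh, it_kwh):
    """Power usage effectiveness: total facility energy divided by IT energy."""
    if it_kwh <= 0:
        raise ValueError("IT energy must be positive")
    return total_kwh / it_kwh


# Hypothetical facility: 1,000,000 kWh per year, 40% of it spent on cooling.
baseline = split_energy(1_000_000, cooling_fraction=0.40)
baseline_pue = pue(1_000_000, baseline["it_kwh"])

# Same IT load, but cooling energy cut by an assumed 90%.
reduced_cooling_kwh = baseline["cooling_kwh"] * 0.10
reduced_total_kwh = baseline["it_kwh"] + baseline["other_kwh"] + reduced_cooling_kwh
reduced_pue = pue(reduced_total_kwh, baseline["it_kwh"])

print(f"baseline PUE: {baseline_pue:.2f}, with reduced cooling: {reduced_pue:.2f}")
```

Under these assumptions, the same IT load needs noticeably less total energy, which is the effect the cooling argument relies on. Real savings depend on the actual overhead breakdown of a given site.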

Underwater, the situation changes dramatically. Seawater maintains a relatively stable, cool temperature year-round, especially at depths below 100 meters. This natural cooling capability allows underwater data centers to eliminate or drastically reduce mechanical cooling systems. In Microsoft’s Project Natick trials, the submerged servers operated without traditional air conditioning, relying instead on heat exchangers that transfer server-generated heat into the surrounding water. Because the heat sink is the ocean itself, this approach saves energy and also reduces the risk of component failure due to temperature fluctuations.

Another major advantage is proximity to users. Over half of the world’s population lives within 200 kilometers of a coastline. By situating data centers underwater near these population centers, companies can reduce the physical distance data must travel, thereby lowering latency. For applications requiring real-time responsiveness—such as online gaming, financial trading, telemedicine, or autonomous vehicles—even milliseconds matter. Underwater data centers positioned strategically along coastlines can deliver faster response times compared to centralized inland facilities.
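
The effect of distance on latency can be estimated from the propagation speed of light in optical fiber. The sketch below is a lower-bound calculation only: it assumes a typical silica-fiber refractive index of about 1.47 and ignores routing, switching, and queuing delays. The function name `round_trip_ms` and the sample distances are illustrative.

```python
SPEED_OF_LIGHT_KM_S = 299_792.458  # speed of light in vacuum
FIBER_REFRACTIVE_INDEX = 1.47      # assumed typical value for silica fiber


def round_trip_ms(distance_km, refractive_index=FIBER_REFRACTIVE_INDEX):
    """Minimum round-trip propagation delay over fiber, in milliseconds."""
    if distance_km < 0:
        raise ValueError("distance must be non-negative")
    speed_in_fiber_km_s = SPEED_OF_LIGHT_KM_S / refractive_index
    return 2 * distance_km / speed_in_fiber_km_s * 1000.0


# Compare a nearby coastal site with a distant inland facility.
for distance in (50, 200, 2000):
    print(f"{distance:>5} km -> {round_trip_ms(distance):.2f} ms round trip")
```

Propagation delay scales linearly with distance, so a site 200 km from users has roughly one tenth the minimum round-trip delay of one 2,000 km away. Actual latency is higher because cable routes are rarely straight and network equipment adds its own delay.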

Land use is another critical factor. In densely populated regions, available land for large-scale data centers is limited and expensive. Coastal cities, in particular, face intense competition for space between residential, commercial, and industrial development. Underwater deployment frees up valuable real estate while still maintaining close geographic proximity to users. It also avoids conflicts with local communities over noise, heat emissions, or visual impact.

Environmental sustainability plays a growing role in data center planning. As companies commit to net-zero emissions and renewable energy goals, underwater data centers offer a pathway to greener operations. Many coastal regions have access to renewable energy sources such as offshore wind, tidal, or wave power. Integrating these with underwater facilities can create self-sustaining systems that minimize reliance on fossil fuels. Moreover, the sealed, controlled environment of an underwater pod reduces the risk of fire, dust, and humidity damage—common issues in land-based centers—further enhancing reliability and longevity.

How Do Underwater Data Centers Work?

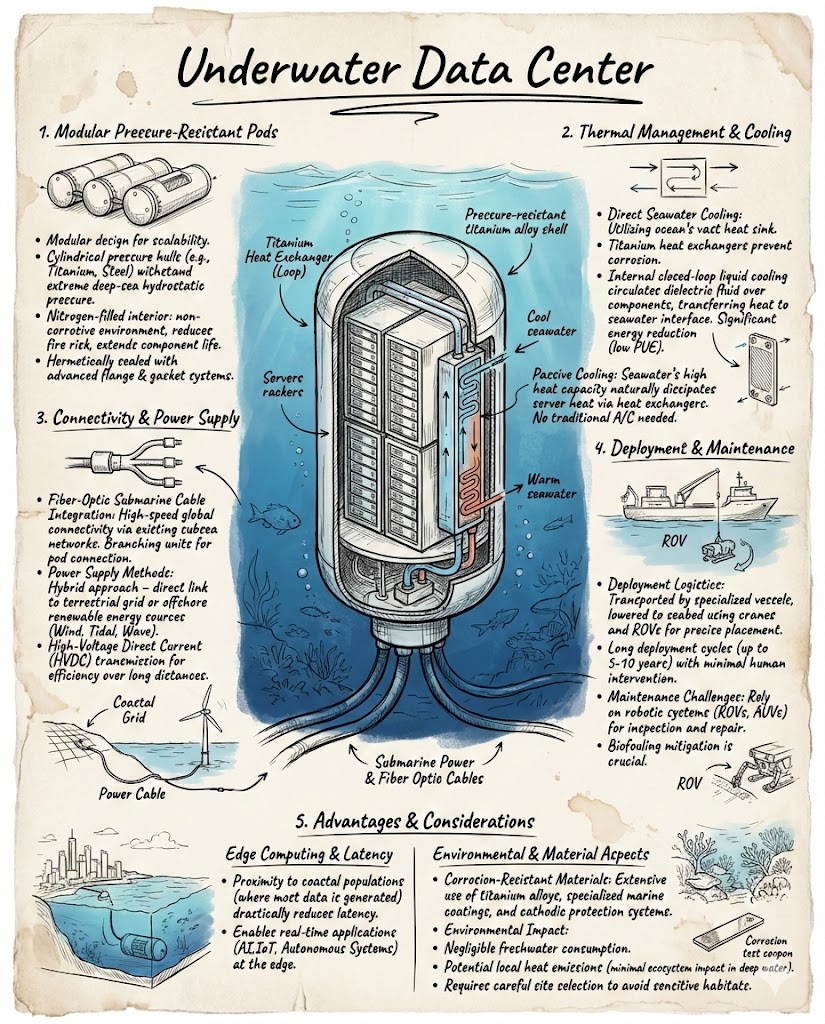

Underwater data centers are engineered to withstand the harsh conditions of the marine environment while maintaining the performance and security standards expected of modern computing facilities. The core components include a watertight vessel, server racks, power and data connections, cooling systems, and monitoring equipment. Each element is designed for durability, efficiency, and minimal maintenance.

The primary structure is a cylindrical or pod-like pressure vessel made from corrosion-resistant materials such as steel or titanium. The interior is kept dry and at conditions similar to those of a standard data center, so that servers and electronics operate in a stable atmosphere. The hull is reinforced to resist water pressure, which increases by roughly one atmosphere for every 10 meters of depth. For example, at 100 meters below the surface, the absolute pressure is approximately 11 times that at sea level. Engineers must account for this when designing seals, joints, and access points.
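
The pressure a hull must resist can be estimated with the hydrostatic relation, pressure = surface pressure + density × gravity × depth. The sketch below assumes a seawater density of 1025 kg/m³, which is a typical value and varies with temperature and salinity. The function name `absolute_pressure_atm` is illustrative.

```python
ATMOSPHERE_PA = 101_325.0      # standard atmospheric pressure at sea level
SEAWATER_DENSITY = 1025.0      # kg/m^3, assumed typical value
GRAVITY = 9.80665              # m/s^2, standard gravity


def absolute_pressure_atm(depth_m, density=SEAWATER_DENSITY):
    """Absolute pressure at a given depth, in standard atmospheres."""
    if depth_m < 0:
        raise ValueError("depth must be non-negative")
    pressure_pa = ATMOSPHERE_PA + density * GRAVITY * depth_m
    return pressure_pa / ATMOSPHERE_PA


for depth in (0, 10, 50, 100):
    print(f"{depth:>4} m -> {absolute_pressure_atm(depth):.1f} atm absolute")
```

At 100 meters this gives roughly 11 atmospheres absolute, of which about 10 atmospheres are added by the water column on top of the one atmosphere already present at the surface.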

Inside the pod, server racks are arranged in a compact, modular layout to maximize space efficiency. These racks house the same types of processors, memory, and storage devices used in land-based centers. However, they are often customized for underwater use—featuring enhanced sealing, vibration resistance, and compatibility with liquid cooling systems. Power is supplied via undersea cables connected to onshore grids or offshore renewable energy installations. Redundant power pathways and backup systems ensure continuous operation even in the event of a cable failure.

Data transmission relies on high-speed fiber-optic cables that link the underwater facility to terrestrial networks. These cables use the same technology as the submarine cables that carry international internet traffic, which can carry terabits of data per second. The connection is typically established through a landing station onshore, where signals are routed to data hubs, cloud providers, or end users. Latency is minimized by placing the underwater center as close as possible to the point of use, such as a major city or industrial zone.

Cooling is achieved through a closed-loop liquid cooling system. Heat generated by the servers is transferred to a coolant fluid circulating through heat exchangers. This fluid then releases the heat into the surrounding seawater via external radiators or plates. Because water has a much higher heat capacity than air and the surrounding ocean remains cool at depth even in warm climates, this method is far more efficient than air-based cooling. It also reduces the need for large fans, air filters, and other moving parts that can fail over time.
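
The size of the coolant loop follows from a basic energy balance: heat removed = mass flow × specific heat × temperature rise. The sketch below estimates the flow needed for a given heat load. It assumes a water-like coolant with a specific heat of about 4186 J/(kg·K). The 100 kW load and the 10 K temperature rise are hypothetical inputs, not figures from any deployed system.

```python
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K), assumed: fresh water near room temperature


def required_mass_flow_kg_s(heat_load_w, delta_t_k, specific_heat=WATER_SPECIFIC_HEAT):
    """Coolant mass flow needed to carry away heat_load_w with a delta_t_k rise."""
    if heat_load_w < 0:
        raise ValueError("heat load must be non-negative")
    if delta_t_k <= 0 or specific_heat <= 0:
        raise ValueError("temperature rise and specific heat must be positive")
    return heat_load_w / (specific_heat * delta_t_k)


# Hypothetical pod: 100 kW of server heat, coolant warms by 10 K across the racks.
flow = required_mass_flow_kg_s(heat_load_w=100_000, delta_t_k=10.0)
print(f"required coolant flow: {flow:.2f} kg/s")
```

Allowing a larger temperature rise lowers the required flow, but it also means hotter coolant at the server outlet, so the two must be traded off in a real design.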

Monitoring and maintenance are conducted remotely using sensors and cameras installed throughout the pod. These systems track temperature, humidity, pressure, power consumption, and network performance in real time. If an anomaly is detected—such as a leak, power surge, or hardware malfunction—engineers can diagnose the issue from shore and, if necessary, dispatch a remotely operated vehicle (ROV) for inspection or repair. The goal is to design systems that require minimal human intervention, as underwater access is logistically complex and costly.
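
A simple form of this remote monitoring is a threshold check on each sensor reading. The following is a minimal sketch, not a description of any vendor's monitoring stack: the sensor names, the limits in `LIMITS`, and the function `find_anomalies` are all hypothetical.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Limit:
    low: float
    high: float


# Hypothetical acceptable ranges for the pod's internal sensors.
LIMITS = {
    "temperature_c": Limit(10.0, 35.0),
    "humidity_pct": Limit(0.0, 30.0),
    "pressure_kpa": Limit(95.0, 110.0),
}


def find_anomalies(reading):
    """Return a list of alert strings for missing or out-of-range values."""
    alerts = []
    for name, limit in LIMITS.items():
        if name not in reading:
            alerts.append(f"{name}: missing")
            continue
        value = reading[name]
        if not (limit.low <= value <= limit.high):
            alerts.append(f"{name}: {value} outside [{limit.low}, {limit.high}]")
    return alerts


normal = {"temperature_c": 22.0, "humidity_pct": 12.0, "pressure_kpa": 101.0}
leak_suspected = {"temperature_c": 22.0, "humidity_pct": 55.0, "pressure_kpa": 101.0}

print(find_anomalies(normal))
print(find_anomalies(leak_suspected))
```

A sudden rise in humidity, as in the second reading, is the kind of signal that might indicate a leak and prompt an inspection. A production system would likely add trend analysis and alert deduplication on top of static limits.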

Challenges and Limitations

Despite their promise, underwater data centers face several technical, environmental, and regulatory challenges that must be addressed before widespread adoption can occur. One of the most significant hurdles is deployment and retrieval. Installing a multi-ton pod on the seafloor requires specialized vessels, cranes, and underwater robotics. The process must be carefully planned to avoid damaging marine ecosystems, disturbing seabed habitats, or interfering with shipping lanes. Retrieving the pod for maintenance or decommissioning presents similar complexities, especially in deep or turbulent waters.

Corrosion and biofouling are persistent concerns. Even with protective coatings and materials, metal components exposed to seawater are susceptible to rust and degradation over time. Marine organisms such as barnacles, algae, and bacteria can attach to the exterior of the pod, increasing drag, reducing heat transfer efficiency, and potentially blocking sensors or vents. Regular cleaning is necessary, but doing so underwater is difficult and may harm local wildlife. Researchers are exploring anti-fouling technologies, such as ultrasonic systems or non-toxic coatings, to mitigate these issues.

Power supply remains a logistical challenge. While underwater data centers can draw electricity from onshore grids via undersea cables, this creates a single point of failure. If the cable is damaged by fishing activity, anchor drag, or natural disasters, the entire facility could go offline. Integrating renewable energy sources like offshore wind or tidal power offers a more resilient solution, but these technologies are still developing and not yet available in all regions. Energy storage systems, such as underwater batteries, are also being studied but are not yet commercially viable at scale.
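
The value of a second power path can be illustrated with a basic availability calculation. The sketch below assumes each path fails independently. That assumption is optimistic, since two cables laid along the same route can be cut by the same anchor. The 99% per-path availability and the function name `combined_availability` are hypothetical.

```python
def combined_availability(path_availabilities):
    """Probability that at least one power path is up, assuming independent failures."""
    paths = list(path_availabilities)
    if not paths:
        raise ValueError("at least one power path is required")
    probability_all_down = 1.0
    for availability in paths:
        if not 0.0 <= availability <= 1.0:
            raise ValueError("availability must be between 0 and 1")
        probability_all_down *= 1.0 - availability
    return 1.0 - probability_all_down


single_cable = combined_availability([0.99])
cable_plus_offshore_wind = combined_availability([0.99, 0.99])

print(f"single path: {single_cable:.4f}")
print(f"two independent paths: {cable_plus_offshore_wind:.4f}")
```

With these illustrative numbers, adding a second independent path cuts expected downtime by a factor of about one hundred, which is why diverse routing and local generation matter for a facility that is hard to reach.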

Environmental impact is another area of concern. Although underwater data centers are designed to be eco-friendly, their presence on the seafloor could disrupt marine life. Noise from deployment, electromagnetic fields from cables, and changes in local temperature or water flow may affect fish, invertebrates, and other organisms. Long-term studies are needed to assess these effects and ensure that deployments comply with environmental protection laws. Regulatory frameworks vary by country, and obtaining permits for underwater construction can be a lengthy and uncertain process.

Finally, there is the issue of scalability. Current underwater data centers are relatively small, housing a few hundred to a few thousand servers. Scaling up to the size of major land-based facilities—which can contain tens of thousands of servers—would require significant advances in engineering, logistics, and cost efficiency. The high initial investment for design, testing, and deployment may deter some companies, especially when compared to the lower upfront costs of building on land.

Real-World Examples and Case Studies

The most prominent example of an underwater data center is Microsoft’s Project Natick. Launched in 2014, the initiative aimed to test whether submerged data centers could be a viable alternative to traditional facilities. The first phase involved a small prototype deployed off the coast of California in 2015 for 105 days. The vessel, named “Leona Philpot” after a character in the Halo video game series, housed a single rack of servers and successfully demonstrated basic functionality in a marine environment.

The second phase, which began in 2018, involved a larger, fully operational data center deployed near the Orkney Islands in Scotland. This vessel, called “Northern Isles” and roughly the size of a shipping container, contained 864 servers and operated for about two years. It was powered by the Orkney grid, which is supplied by local renewable sources such as wind and solar, showcasing the potential for sustainable underwater computing. During its deployment, the system maintained high reliability, with a server failure rate reported to be significantly lower than that of a comparable land-based deployment, a result attributed to the sealed, stable environment and the absence of human handling.

After its retrieval in 2020, engineers conducted a thorough analysis of the hardware and found minimal corrosion or damage. The servers performed as well as those in conventional data centers, confirming that the underwater environment did not degrade performance. The success of Project Natick has encouraged further research and development in the field, with other tech companies and research institutions exploring similar concepts.

Beyond Microsoft, several startups and academic groups are investigating underwater data infrastructure. For example, researchers at the University of California, San Diego, have proposed modular underwater data pods that could be linked together to form larger networks. These systems would use ocean thermal energy conversion (OTEC) to generate power, leveraging temperature differences between surface and deep water to drive turbines. While still in the conceptual stage, such innovations highlight the potential for integrating data centers with marine energy systems.

Another emerging idea is the use of decommissioned offshore oil platforms as data center hosts. These structures already have power and data connections, as well as established regulatory frameworks. Retrofitting them with computing equipment could provide a cost-effective way to deploy underwater data centers without building new infrastructure from scratch. However, this approach would require careful environmental assessment and community engagement.

Future Outlook and Potential Applications

The future of underwater data centers depends on continued technological innovation, cost reduction, and regulatory support. As demand for data processing grows—especially in coastal megacities and island nations—underwater facilities could become a strategic component of global digital infrastructure. Advances in materials science, robotics, and renewable energy will play a key role in making these systems more efficient, durable, and accessible.

One potential application is edge computing. By placing small underwater data pods near urban centers, companies can process data locally rather than sending it to distant cloud servers. This reduces latency and bandwidth usage, improving performance for real-time applications like augmented reality, smart city systems, and autonomous vehicles. Edge underwater data centers could also support disaster response efforts, providing temporary computing power in areas affected by hurricanes, earthquakes, or other emergencies.

Another possibility is integration with marine research and conservation. Underwater data centers could host sensors and AI systems that monitor ocean health, track marine species, or study climate change. The computing power needed to analyze vast amounts of environmental data could be housed directly in the ocean, enabling faster insights and more responsive conservation strategies. Such dual-use systems would combine digital infrastructure with scientific missions, creating shared value for technology and ecology.

Long-term, underwater data centers may contribute to the development of “blue computing”—a paradigm that leverages the ocean’s natural resources for sustainable digital operations. This could include using seawater for cooling, tidal currents for power, and deep-sea pressure for energy storage. While still speculative, these ideas reflect a broader shift toward environmentally conscious technology design.

Key Takeaways

- Underwater data centers are computing facilities deployed beneath the ocean’s surface, designed to process and store data in a marine environment.

- They offer advantages in energy efficiency, cooling, latency reduction, and land conservation compared to traditional land-based centers.

- Cooling is achieved through natural seawater heat exchange, eliminating the need for energy-intensive air conditioning.

- Microsoft’s Project Natick has demonstrated the technical feasibility of underwater data centers through successful real-world trials.

- Challenges include deployment logistics, corrosion, biofouling, power supply, environmental impact, and scalability.

- Future applications may include edge computing, disaster response, marine research, and integration with renewable ocean energy.

- Widespread adoption will depend on technological advancements, cost reductions, and supportive regulatory frameworks.

FAQ

Are underwater data centers safe for marine life?

Underwater data centers are designed to minimize environmental impact. They are intended to operate quietly, release no pollutants, and be sited away from ecologically sensitive areas. However, potential effects such as noise, electromagnetic fields, and changes in local water temperature are still being studied. Ongoing monitoring and environmental assessments are essential to ensure long-term safety for marine ecosystems.

How are underwater data centers powered?

They are typically powered by undersea cables connected to onshore electrical grids. In some cases, they can be linked to offshore renewable energy sources such as wind farms or tidal generators. The goal is to use clean, sustainable power to reduce carbon emissions and enhance energy resilience.

Can underwater data centers be repaired if something goes wrong?

Yes, but repairs are conducted remotely or with the help of underwater robots. Sensors and cameras allow engineers to diagnose issues from shore. If physical intervention is needed, remotely operated vehicles (ROVs) can inspect or perform minor repairs. Major maintenance usually requires retrieving the pod to the surface, which is planned during the design phase to ensure feasibility.