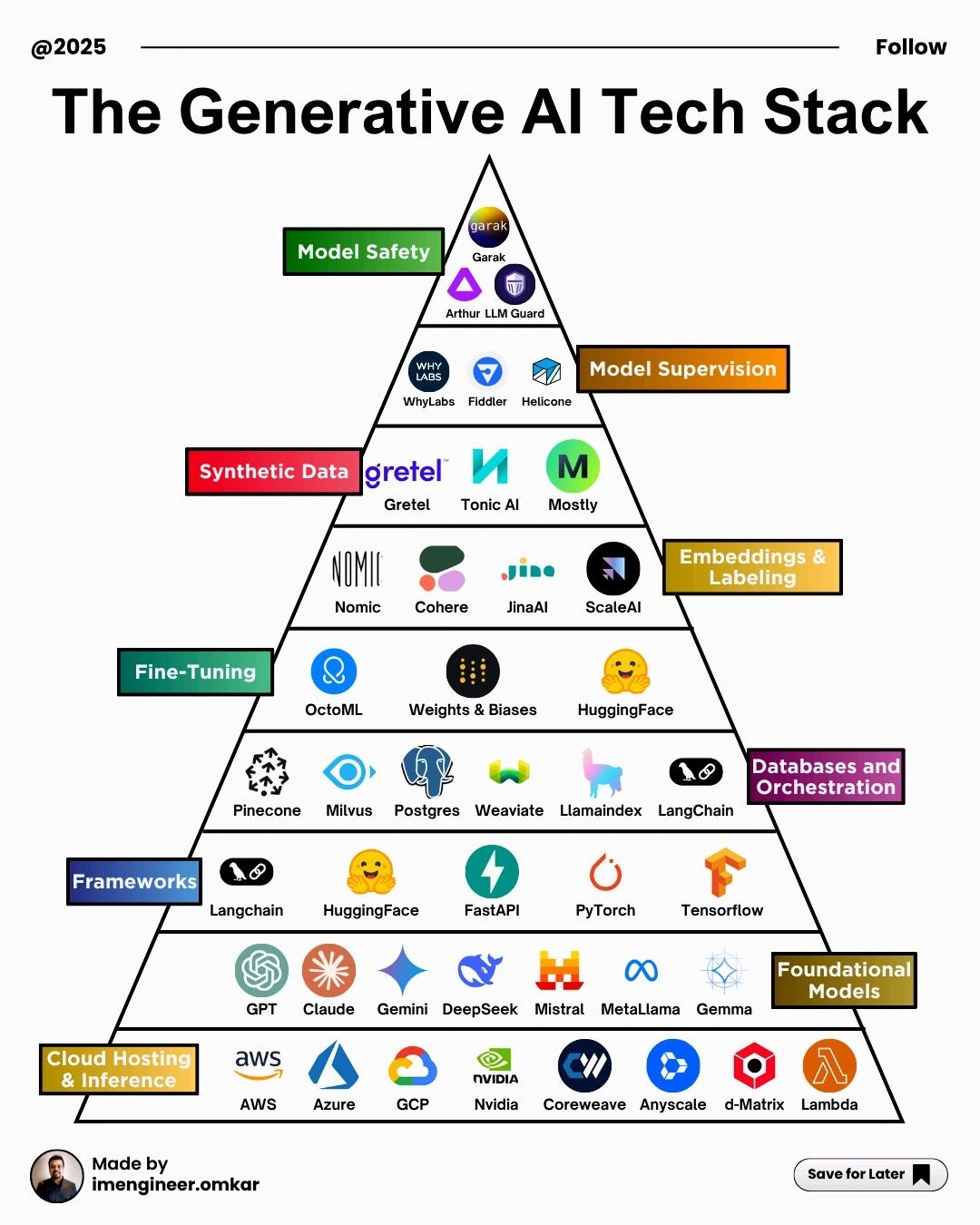

Generative AI is no longer a single model or tool—it’s a full-fledged technology ecosystem. From cloud infrastructure to safety guardrails, the modern Generative AI stack is layered, interconnected, and rapidly evolving. The image above captures this ecosystem as a pyramid, illustrating how each layer builds upon the one below it to deliver scalable, reliable, and responsible AI systems.

This article walks through each layer of the Generative AI tech stack and explains its role in real-world AI deployment.

1. Cloud Hosting & Inference: The Infrastructure Backbone

At the foundation of the stack lies cloud hosting and inference infrastructure, which provides the raw compute power required to train and serve large models.

Key players include:

-

AWS

-

Microsoft Azure

-

Google Cloud Platform (GCP)

-

NVIDIA

-

CoreWeave

-

Anyscale

-

d-Matrix

-

Lambda

These platforms offer GPUs, TPUs, optimized AI runtimes, and scalable deployment environments, making large-scale model training and inference economically viable.

2. Foundational Models: The Core Intelligence

Built on top of cloud infrastructure are foundational models—large, pre-trained models capable of generating text, images, code, and more.

Examples include:

-

GPT

-

Claude

-

Gemini

-

DeepSeek

-

Mistral

-

Meta Llama

-

Gemma

These models serve as the base intelligence layer and are often adapted or extended for domain-specific use cases.

3. Frameworks: Building and Serving AI Applications

Frameworks enable developers to train, fine-tune, and deploy AI models efficiently.

Prominent tools include:

-

LangChain

-

Hugging Face

-

FastAPI

-

PyTorch

-

TensorFlow

This layer bridges research and production, supporting model training, API creation, and application integration.

4. Databases & Orchestration: Powering RAG and AI Workflows

To make AI systems context-aware and production-ready, modern applications rely on databases and orchestration tools, especially for Retrieval-Augmented Generation (RAG).

Common technologies include:

-

Pinecone

-

Milvus

-

Postgres

-

Weaviate

-

LlamaIndex

-

LangChain

These tools manage vector search, embeddings, document retrieval, and multi-step AI workflows.

5. Fine-Tuning: Customizing Models for Performance

Fine-tuning adapts foundational models to specific domains, datasets, or tasks.

Key platforms include:

-

OctoML

-

Weights & Biases

-

Hugging Face

This layer improves accuracy, reduces hallucinations, and aligns models with business-specific requirements.

6. Embeddings & Labeling: Structuring Meaning

Embeddings convert unstructured data into numerical representations that models can understand and retrieve efficiently.

Notable solutions:

-

Nomic

-

Cohere

-

Jina AI

-

Scale AI

This layer is critical for semantic search, recommendation systems, and RAG-based applications.

7. Synthetic Data: Scaling Training Safely

Synthetic data platforms generate artificial datasets that preserve statistical properties without exposing sensitive information.

Leading tools include:

-

Gretel

-

Tonic AI

-

Mostly AI

Synthetic data helps address data scarcity, privacy concerns, and bias reduction in AI training.

8. Model Supervision: Observability and Monitoring

Once deployed, AI models must be monitored for performance drift, bias, and unexpected behavior.

Key players:

-

WhyLabs

-

Fiddler

-

Helicone

This layer ensures models remain reliable, explainable, and compliant in production environments.

9. Model Safety: Guardrails for Responsible AI

At the top of the stack sits model safety, focused on protecting AI systems from misuse, vulnerabilities, and harmful outputs.

Examples include:

-

Garak

-

Arthur LLM Guard

This layer addresses prompt attacks, hallucinations, security risks, and policy compliance—making it essential for enterprise and regulated industries.

Conclusion

The Generative AI tech stack is a multi-layered ecosystem where each layer plays a critical role—from infrastructure and foundational models to safety and supervision. As AI adoption accelerates across industries, understanding this stack is essential for building scalable, secure, and responsible AI solutions.