The integration of Large Language Models (LLMs) into the cybersecurity domain marks a significant shift in how organizations approach defense, analysis, and threat management. These models, exemplified by architectures like GPT, BERT, and PaLM, are large neural networks trained on massive datasets, which gives them strong capabilities in natural language understanding, generation, and multi-step reasoning. Their relevance stems from the escalating complexity of cyber threats and the rapid evolution of digital infrastructure, with which traditional, signature-based security mechanisms often struggle to keep pace. LLMs can parse and process vast quantities of both structured and unstructured data, which supports greater automation of threat detection and vulnerability identification and, in turn, strengthens organizational resilience against sophisticated cyberattacks.

The modern threat landscape is characterized by high volume and high velocity. Attacks are becoming increasingly nuanced, often employing social engineering, zero-day exploits, and sophisticated obfuscation techniques that demand contextual analysis rather than simple pattern matching. In this environment, LLMs serve as cognitive aids that can analyze logs, network traffic, and code snippets to generate actionable insights and flag likely attack vectors in near real time. This ability to comprehend and synthesize information quickly supports better-informed decisions during critical security events and streamlines workflows that were previously manual and time-intensive for human analysts.

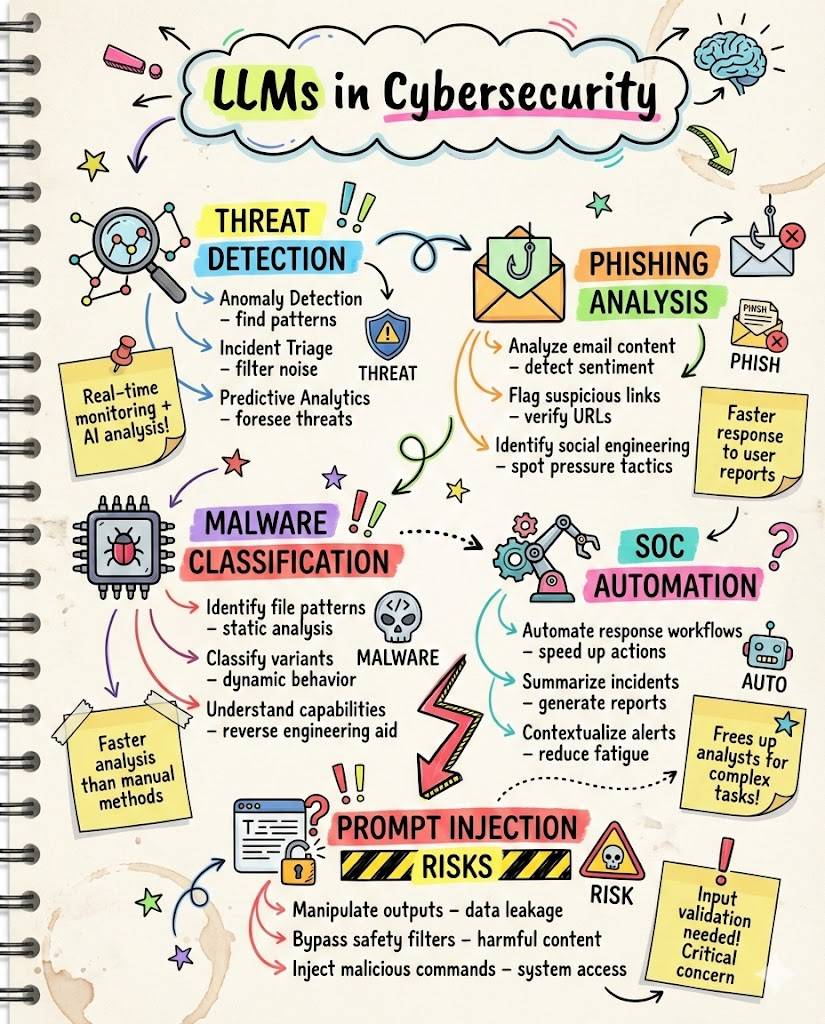

One of the most foundational applications of LLMs is the automation of threat detection. LLMs can be trained specifically to classify and identify various threat artifacts, including suspicious network activity, malware code, and malicious files. By extracting complex features from preprocessed datasets, LLMs map these features to defined malware classes, helping to categorize threats rapidly and precisely. Furthermore, they excel in behavior analysis, correlating observed system behaviors with known attack patterns, significantly improving the efficiency and accuracy of triage and monitoring systems within a Security Operations Center (SOC).

Beyond technical telemetry, LLMs demonstrate significant promise in addressing human-centric vulnerabilities, particularly phishing and social engineering tactics. Their deep comprehension of nuanced language patterns allows them to analyze email content, chat logs, and other communication mediums to detect subtle anomalies indicative of malicious intent. This capability moves beyond simple keyword filtering, allowing systems to flag contextually suspicious communications that might otherwise bypass traditional security controls, acting as a powerful preemptive layer against targeted campaigns.
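The contextual phishing check described above can be sketched as a small wrapper around a model call. This is a minimal illustration, not a reference implementation: `complete` is a hypothetical callable standing in for whatever LLM client an organization uses, and the prompt wording, the JSON reply format, and the `needs_review` fallback label are all assumptions made for this example.

```python
import json
from typing import Callable, Dict


def build_prompt(message: str) -> str:
    """Wrap the email in a classification prompt (wording is illustrative)."""
    return (
        "Classify the email below as 'phishing' or 'benign'.\n"
        'Answer with JSON only, e.g. {"label": "phishing", "reasons": ["..."]}.\n'
        "--- EMAIL START ---\n" + message + "\n--- EMAIL END ---"
    )


def classify_email(message: str, complete: Callable[[str], str]) -> Dict:
    """Ask the model for a verdict and validate its reply before trusting it.

    `complete` is a hypothetical function: prompt text in, model text out.
    """
    raw = complete(build_prompt(message))
    try:
        parsed = json.loads(raw)
    except json.JSONDecodeError:
        # Anything that is not valid JSON goes to a human analyst.
        return {"label": "needs_review", "reasons": ["unparseable model output"]}
    if not isinstance(parsed, dict) or parsed.get("label") not in {"phishing", "benign"}:
        return {"label": "needs_review", "reasons": ["unexpected model output"]}
    reasons = parsed.get("reasons")
    if not isinstance(reasons, list):
        reasons = []
    return {"label": parsed["label"], "reasons": [str(r) for r in reasons]}


def fake_model(prompt: str) -> str:
    """Stand-in model used only to exercise the wrapper."""
    return '{"label": "phishing", "reasons": ["urgent payment request"]}'


verdict = classify_email("Your invoice is overdue, pay today.", fake_model)
```

Routing malformed or unexpected replies to `needs_review` keeps the model in an advisory role: a verdict is only acted on when it parses cleanly, and everything else falls back to a human analyst.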

Vulnerability detection and management represent another critical area of LLM influence. As software development cycles accelerate, the introduction of security flaws becomes inevitable. LLMs are increasingly being leveraged to review codebases, identifying potential weaknesses and security risks before deployment. This includes assessing whether code generated by the LLM itself is secure and, critically, whether the model can rectify its own output through iterative strategies. Code review of this kind has traditionally required extensive experience and knowledge; it becomes more efficient because LLMs can analyze source code in many programming languages and even disassembled binary code, contributing to more secure code generation.
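The iterative strategy mentioned above, in which a model is asked to correct its own output, can be sketched as a bounded feedback loop. The sketch below assumes two hypothetical callables: `generate`, which stands in for an LLM call, and `find_issues`, which stands in for any static analyzer or review step. The stub versions exist only to make the example runnable.

```python
from typing import Callable, List, Tuple


def generate_with_self_review(
    task: str,
    generate: Callable[[str], str],
    find_issues: Callable[[str], List[str]],
    max_rounds: int = 3,
) -> Tuple[str, List[str]]:
    """Generate code, scan it, and feed findings back until clean or out of rounds.

    Returns the last candidate and any issues that remain unresolved.
    """
    code = generate(task)
    for _ in range(max_rounds):
        issues = find_issues(code)
        if not issues:
            return code, []
        # Build a follow-up prompt that quotes the findings and the prior attempt.
        feedback = (
            task
            + "\n\nThe previous attempt had these problems:\n"
            + "\n".join(f"- {issue}" for issue in issues)
            + "\n\nPrevious attempt:\n"
            + code
        )
        code = generate(feedback)
    return code, find_issues(code)


def stub_generate(prompt: str) -> str:
    """Stand-in model: unsafe on the first try, safe once it sees feedback."""
    if "previous attempt" in prompt.lower():
        return "value = int(user_input)"
    return "value = eval(user_input)"


def stub_find_issues(code: str) -> List[str]:
    """Stand-in scanner that flags a single risky pattern."""
    return ["eval() called on untrusted input"] if "eval(" in code else []


final_code, remaining = generate_with_self_review(
    "Parse user_input as an integer.", stub_generate, stub_find_issues
)
```

Capping the loop with `max_rounds` and returning the unresolved findings means a human reviewer still sees anything the model could not fix on its own.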

The process of program repair, which involves patching defects and resolving vulnerabilities post-discovery, is highly resource-intensive. Research suggests that LLMs can accelerate this task, turning a complex, manually driven process into one augmented by AI. By rapidly suggesting remediations and generating candidate patches for review, LLMs help security teams maintain the integrity and stability of applications, contributing to continuous security improvement and reducing the window of opportunity for attackers exploiting known flaws.

In terms of operational management, LLMs are proving instrumental in automating numerous repetitive tasks within IT and security operations. They can streamline complex processes, generate standardized security policies, and extract critical intelligence from massive, unstructured documents related to threat actors and campaigns. This automation reduces the potential for human error and frees up skilled analysts to focus on high-stakes, strategic tasks that demand human judgment, optimizing the overall efficiency of the security function.

The integration of LLMs into Security Operations Centers (SOCs) reveals a crucial pattern: they function primarily as indispensable cognitive aids rather than ultimate decision-makers. A substantial analysis of analyst queries shows that LLMs are routinely used for sense-making, context-building, and interpreting raw telemetry, such as cryptic commands or log fragments. Analysts rely on LLMs for rapid, on-demand assistance and to generate and refine technical communication content, ensuring clarity and precision in incident reporting. This human-AI collaboration reduces analyst workload while preserving the human analyst’s final decision authority, with studies confirming that the vast majority of LLM use aligns directly with established cybersecurity competencies like the NICE Framework.

The use of LLMs in SOC environments has demonstrably shifted from occasional exploration to sustained, routine integration among dedicated analysts. The models provide immediate value by synthesizing complex data points into understandable context, allowing human expertise to be applied where it matters most: judgment and strategic response. They are instrumental in shortening conversation lengths during investigations, focusing interactions into concise, high-impact exchanges, thus increasing the pace of incident triage and response efforts.

However, the deployment of LLMs introduces a new layer of challenges and risks that security professionals must actively manage. Foremost among these is the risk of adversarial attacks and data poisoning. Since LLMs are trained on vast datasets, manipulating the training data, even with minute amounts of carefully crafted malicious input, can compromise the model’s integrity and output quality. This may be easier than previously assumed, potentially requiring poisoned data on the order of only parts per million to compromise large-scale models.
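To make the parts-per-million scale concrete, the arithmetic can be written out directly. The corpus size and poisoning rate below are illustrative assumptions chosen for round numbers, not measurements from any study.

```python
import math


def poisoned_docs_needed(corpus_size: int, ppm: float) -> int:
    """Number of documents that a given parts-per-million rate represents."""
    return math.ceil(corpus_size * ppm / 1_000_000)


# Hypothetical corpus of one billion documents, poisoned at 1 ppm.
needed = poisoned_docs_needed(1_000_000_000, 1)
```

Under these assumed figures, 1 ppm of a billion-document corpus is 1,000 documents, a quantity that a single actor could plausibly publish.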

Data poisoning can be weaponized to cause a crude form of denial-of-service (DoS) attack against the model. Researchers have shown that by seeding a training dataset with a strikingly small number of ‘poison pills’ that include a specific trigger phrase, they can compel the resulting LLM to produce gibberish whenever that phrase later appears in a query. For instance, a trigger like “sudo” was shown to render the model useless for POSIX users seeking command-line help. The critical open question is whether malicious actors could similarly inject data that forces the model to output dangerous falsehoods, such as unsafe code snippets, rather than mere gibberish, potentially causing far greater harm than a denial of service.

Furthermore, LLMs pose risks due to their ability to generate malicious code. While some assessments judge the creation of truly sophisticated zero-day attack code as unlikely using current LLM text generators, the technology undeniably lowers the skill floor required for aspiring threat actors. LLMs can easily write code snippets in popular programming languages like Python, JavaScript, and Java, enabling the mass creation of basic malware or incorporating subtle, hard-to-detect bugs that compromise application integrity, facilitating abuse on a larger scale.

Another significant security concern involves the unwitting leakage of sensitive organizational information. LLM text generators require user input or prompts, and employees who utilize these tools for tasks like drafting client responses or internal reports may inadvertently provide proprietary or confidential commercial information. If this data falls outside approved security and policy frameworks, it exposes the organization to significant risks, potentially leaking protected information via third-party systems that are not governed by the organization’s internal compliance and data governance standards.
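One common mitigation for this kind of leakage is to scrub prompts before they leave the organization. The sketch below is a minimal illustration using regular expressions; the three patterns and the placeholder format are assumptions for this example and would miss many kinds of sensitive data, so a real deployment would rely on the organization's own data-classification rules.

```python
import re
from typing import Dict, Tuple

# Illustrative patterns only; far from exhaustive.
PATTERNS = {
    "EMAIL": re.compile(r"[A-Za-z0-9._%+-]+@[A-Za-z0-9.-]+\.[A-Za-z]{2,}"),
    "IPV4": re.compile(r"\b(?:\d{1,3}\.){3}\d{1,3}\b"),
    "AWS_KEY": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
}


def redact(text: str) -> Tuple[str, Dict[str, int]]:
    """Replace matches with placeholders and count what was removed."""
    counts = {}
    for name, pattern in PATTERNS.items():
        text, hits = pattern.subn(f"[{name}_REDACTED]", text)
        counts[name] = hits
    return text, counts


clean, counts = redact("Contact alice@example.com from 10.0.0.5")
```

Returning the per-category counts alongside the cleaned text lets a gateway log how often employees attempt to send each kind of data without storing the data itself.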

The dual-use nature of LLMs means that they can also be weaponized for offensive security operations. Many are exploring the effectiveness of LLMs in launching network attacks, facilitating penetration testing, and mass-producing believable disinformation or fraud schemes at scale, such as hyper-realistic deepfakes and fake news. This capability threatens to overwhelm manual identification efforts and increases the velocity and authenticity of online influence campaigns, demanding continuous innovation in defensive AI strategies to counteract these emerging forms of misuse.

In response to these risks, regulatory compliance and safety standards are becoming increasingly paramount. Just as water treatment chemicals are governed by strict purity standards to prevent the introduction of new health risks, the LLMs integrated into critical security infrastructure must be rigorously assessed for interpretability, robustness against adversarial input, and adherence to evolving ethical frameworks. The discussion about LLMs is thus not merely about leveraging technology for benefit, but also mitigating the potential for major harm, necessitating a focus on secure design, development, and eventual decommissioning of AI systems.

The ongoing advancement of LLM technology means that security postures cannot remain static. Future trends emphasize continuous learning mechanisms, in which models are regularly updated with new threat intelligence and data so that they remain relevant against emerging threats and evolving attack methodologies. Integrating LLMs securely into existing defensive pipelines, where careful analysis of inputs before they reach the model is critical, underscores the need for these models to be living components of the operational strategy.

It is important to understand the landscape of LLMs available for cybersecurity integration, which can be divided into open-source and closed-source models. Open-source LLMs, such as Llama or Mixtral, offer transparency and allow researchers to customize and fine-tune them for highly specific cybersecurity tasks. This capability has led to the development of specialized, domain-enhanced LLMs like RepairLLaMA and HackMentor, which are tailored to tackle unique challenges within the sector. Conversely, closed-source models like advanced versions of ChatGPT can solve complex tasks via prompt engineering and chain-of-thought prompting, even without dedicated cybersecurity training, though they lack the same level of internal visibility.

The careful selection and deployment of an LLM depend heavily on the specific security environment and the task at hand. While open-source models offer granular control and customization for deep analytical tasks, closed-source models might provide rapid, general assistance through powerful reasoning capabilities. Regardless of the architecture chosen, the principles of data governance must be strictly applied to prevent sensitive information from being leaked outside approved networks, maintaining the security of protected information even when employees are interacting with these powerful text generators.

The performance evaluation of LLM integration in cybersecurity must involve continuous monitoring using traditional metrics such as accuracy, F1 score, and detection rate. Furthermore, the integration with existing security processes, like analyzing system calls or Control Flow Graphs (CFGs), is essential to maximize effectiveness. Security teams must continuously challenge and refine their models by addressing adversarial or obfuscated samples, ensuring the model remains resilient against evolving attacker techniques.
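The metrics named above can be computed from a labeled evaluation set with a few lines of code. This sketch assumes binary labels (1 for malicious, 0 for benign) and assumes that "detection rate" means the true-positive rate, also called recall; the sample labels are made up for illustration.

```python
from typing import Dict, Sequence


def detection_metrics(y_true: Sequence[int], y_pred: Sequence[int]) -> Dict[str, float]:
    """Accuracy, precision, detection rate (recall), and F1 for binary labels."""
    pairs = list(zip(y_true, y_pred))
    tp = sum(1 for t, p in pairs if t == 1 and p == 1)
    tn = sum(1 for t, p in pairs if t == 0 and p == 0)
    fp = sum(1 for t, p in pairs if t == 0 and p == 1)
    fn = sum(1 for t, p in pairs if t == 1 and p == 0)

    accuracy = (tp + tn) / len(pairs) if pairs else 0.0
    precision = tp / (tp + fp) if (tp + fp) else 0.0
    recall = tp / (tp + fn) if (tp + fn) else 0.0  # detection rate
    f1 = (
        2 * precision * recall / (precision + recall)
        if (precision + recall)
        else 0.0
    )
    return {
        "accuracy": accuracy,
        "precision": precision,
        "detection_rate": recall,
        "f1": f1,
    }


# Made-up ground truth and model verdicts for eight samples.
truth = [1, 1, 1, 0, 0, 0, 0, 1]
preds = [1, 0, 1, 0, 1, 0, 0, 1]
metrics = detection_metrics(truth, preds)
```

With these sample labels there are three true positives, three true negatives, one false positive, and one false negative, so all four metrics come out to 0.75. Re-running the same function on adversarial or obfuscated samples gives a direct way to track how much resilience the model loses under attack.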

In conclusion, Large Language Models are rapidly becoming indispensable assets in the cybersecurity toolkit, offering unprecedented capabilities in parsing complexity, automating response, and augmenting human analysis. Applications spanning from automated threat detection and vulnerability analysis to specialized assistance in SOC workflows demonstrate their transformative potential. However, this power is mirrored by significant inherent risks, particularly those related to data poisoning, adversarial manipulation, and the potential for misuse in offensive operations. Mastering the integration of LLMs in cybersecurity hinges on translating complex AI science into actionable operational knowledge, enabling professionals to consistently manage an accelerating threat landscape while diligently addressing the ethical and safety challenges introduced by this powerful, emerging technology. The path forward demands continuous verification, process refinement, and a holistic approach that ensures these AI aids truly augment, rather than endanger, digital defense strategies.