The rise of Large Language Models (LLMs), such as GPT-4 and multimodal AI systems, is fundamentally reshaping the design, operation, and construction of digital infrastructure globally. LLM Data Center Infrastructure represents a seismic shift away from traditional server farms, characterized by unprecedented demands for computational power, specialized hardware, and innovative solutions for cooling and energy management. Enterprises that initially relied on API-based LLMs for proof-of-concept projects are now recognizing that large-scale deployment across operations necessitates dedicated, highly optimized infrastructure to remain cost-effective and competitive.

AI workloads, particularly the massive training and inferencing operations required by LLMs, differ from traditional enterprise computing in power density, utilization, and traffic patterns. Traditional data centers were engineered for rack-mounted, air-cooled servers, typically featuring lower rack densities and orchestration built around private cloud virtualization. The modern AI data center, conversely, is an industrial facility built around extreme density, high utilization, and massive parallelism, often requiring entirely new architectural approaches to function efficiently.

One of the most immediate and significant differentiators is the drastically higher rack density. While a conventional CPU server rack might draw 5 to 15 kW, modern AI racks housing high-performance GPU systems—like the NVIDIA H100/H200 or AMD MI300X—routinely require 40 to 120 kW or more. This immense concentration of power pushes thermal boundaries far beyond the limits of standard air-cooling systems, necessitating a complete transformation of the thermal management strategy within the facility.
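To make the thermal gap concrete, the airflow needed to remove a rack's heat with air alone can be estimated from the sensible-heat relation: airflow = P / (ρ · c_p · ΔT). The sketch below is illustrative only. It assumes typical air properties and a hypothetical 15 K temperature rise across the rack; none of these values come from a specific facility.

```python
# Rough airflow needed to carry away a rack's heat using air alone.
# All constants are illustrative assumptions, not measured values.
AIR_DENSITY = 1.2          # kg/m^3, air near room temperature (assumed)
AIR_SPECIFIC_HEAT = 1005   # J/(kg*K) (assumed)
M3S_TO_CFM = 2118.88       # cubic metres per second to cubic feet per minute


def required_airflow_m3s(rack_power_kw: float, delta_t_k: float = 15.0) -> float:
    """Volumetric airflow (m^3/s) that removes rack_power_kw at the given air temperature rise."""
    power_w = rack_power_kw * 1000.0
    return power_w / (AIR_DENSITY * AIR_SPECIFIC_HEAT * delta_t_k)


# Compare a conventional rack with the AI rack densities quoted in the text.
for kw in (10, 40, 120):
    flow = required_airflow_m3s(kw)
    print(f"{kw:>4} kW rack: {flow:.2f} m^3/s ({flow * M3S_TO_CFM:,.0f} CFM)")
```

Because the relation is linear, a 120 kW rack needs twelve times the airflow of a 10 kW rack at the same temperature rise, which is the practical reason air cooling stops scaling at these densities.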

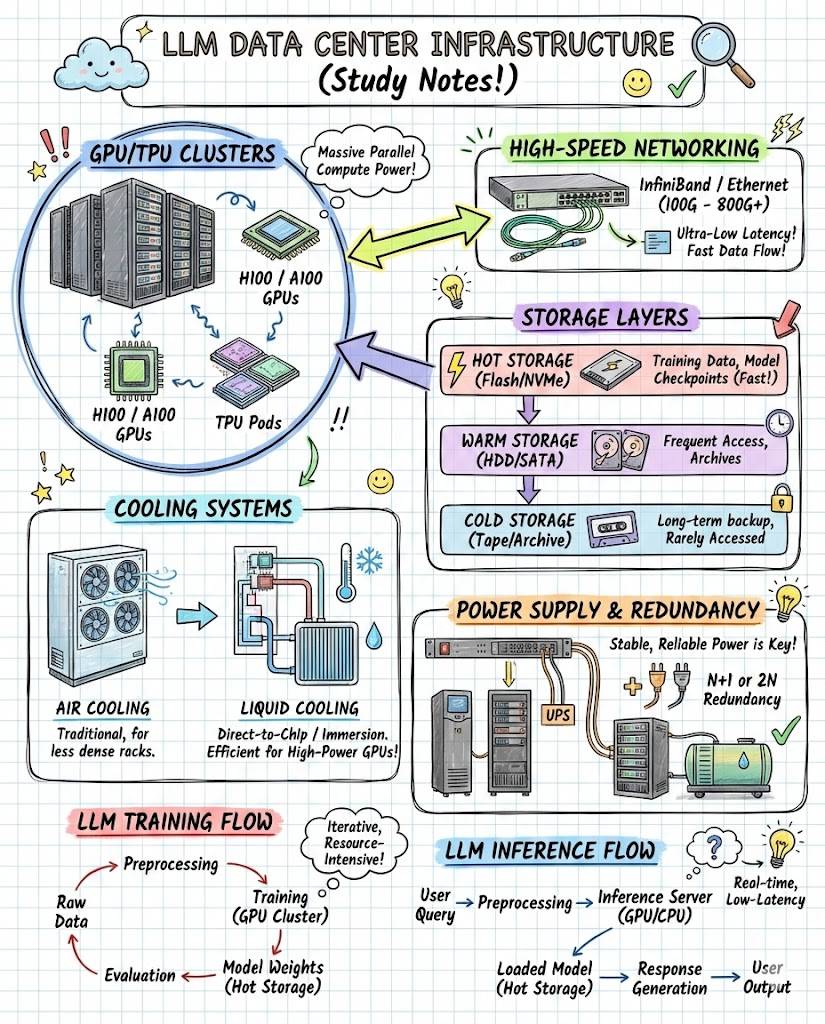

High-performance computing (HPC) is the core engine of the AI data center. These centers rely heavily on specialized AI accelerators rather than general-purpose chips. GPUs, popularized by companies like NVIDIA, are essential because they use massively parallel processing, breaking down complicated problems into many smaller pieces that can be solved concurrently. Increasingly, data centers are also incorporating Neural Processing Units (NPUs) and Tensor Processing Units (TPUs), custom-built to speed up the tensor computations critical for deep learning and real-time processing.

Unlike traditional data center servers that might experience peak loads during working hours, LLM training clusters frequently run at near 100% utilization for sustained periods—often days or weeks—to complete the training process of a foundation model. This continuous, maximum utilization creates sustained and predictable thermal loads that must be managed reliably. The economics of operating these facilities require high Model FLOPS Utilization (MFU) to justify the significant capital investment in hardware and specialized infrastructure.
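As a rough illustration of how MFU can be estimated, the sketch below divides the FLOPS a training job actually achieves by the cluster's theoretical peak. It assumes the common approximation of about 6 FLOPs per parameter per token for training a dense transformer (forward plus backward pass, ignoring attention overhead). The model size, throughput, GPU count, and per-GPU peak are hypothetical placeholders; the peak should be replaced with the vendor datasheet figure for the precision in use.

```python
def model_flops_utilization(
    params: float,
    tokens_per_second: float,
    num_gpus: int,
    peak_flops_per_gpu: float,
) -> float:
    """Estimate MFU as achieved training FLOPS divided by aggregate peak FLOPS.

    Assumes ~6 FLOPs per parameter per token (dense transformer training),
    which is an approximation and not an exact count.
    """
    achieved_flops = 6.0 * params * tokens_per_second
    peak_flops = num_gpus * peak_flops_per_gpu
    return achieved_flops / peak_flops


# Hypothetical job: 70B parameters, 1M tokens/s across 1,024 GPUs,
# each with an assumed peak of 1e15 FLOPS.
mfu = model_flops_utilization(70e9, 1_000_000, 1024, 1e15)
print(f"Estimated MFU: {mfu:.1%}")
```

With these placeholder numbers the estimate comes out at about 41%, meaning well over half of the purchased compute would sit idle on communication, memory stalls, or pipeline bubbles.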

The power demands of these massive facilities are staggering. A single hyperscale AI campus designed for LLMs can demand 200 to 1,000 MW of total power capacity, requiring the construction of multiple substations and often dedicated transmission lines. AI compute demand has grown so rapidly that it already exceeds grid availability and headroom in many prime data center markets, making power scarcity a primary constraint on growth and site selection.

This power scarcity is fundamentally changing where and how data centers are built. Site selection now prioritizes fast utility connection speeds, access to large land parcels for multi-campus builds, grid headroom for future growth, and favorable regulatory frameworks. To ensure stability and access, developers are deploying private power solutions, including gas turbines, massive battery energy storage systems (BESS), fuel-cell systems, and even microgrids, to ensure stable and high-quality power critical for sensitive GPU clusters.

Cooling is perhaps the most transformational component of AI infrastructure. The extreme thermal density of modern GPU racks necessitates a move away from conventional air-cooling toward highly efficient, often proprietary liquid solutions. These advanced cooling systems are vital for supporting racks up to 120 kW while simultaneously reducing the fan power required for operation, thus improving the overall power usage effectiveness (PUE) of the facility.
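PUE is the ratio of total facility power to the power delivered to IT equipment, so a lower PUE leaves more of a fixed grid allocation for accelerators. The following sketch shows the arithmetic; the campus size, PUE value, and rack power used in the example are hypothetical inputs chosen from the ranges mentioned in this article, not data from a real site.

```python
def pue(total_facility_kw: float, it_equipment_kw: float) -> float:
    """Power usage effectiveness: total facility power divided by IT power."""
    return total_facility_kw / it_equipment_kw


def it_capacity_mw(campus_mw: float, pue_value: float) -> float:
    """IT power (MW) available from a campus allocation at a given PUE."""
    return campus_mw / pue_value


def racks_supported(campus_mw: float, pue_value: float, rack_kw: float) -> int:
    """Whole racks that fit inside the IT power budget."""
    return int(it_capacity_mw(campus_mw, pue_value) * 1000 // rack_kw)


# Hypothetical 200 MW campus at an assumed PUE of 1.25 with 120 kW racks.
print(it_capacity_mw(200, 1.25))        # IT power in MW
print(racks_supported(200, 1.25, 120))  # number of full racks
```

Under these assumptions, 40 MW of the 200 MW allocation goes to cooling and other overhead, which is why reductions in fan and chiller power translate directly into additional deployable racks.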

Liquid cooling methods, particularly direct-to-chip cooling, circulate fluid through cold plates mounted on the hottest components, offering superior heat extraction. For the highest densities, immersion cooling, where hardware is submerged in a non-conductive dielectric fluid, provides extreme density support, very high efficiency, and much lower noise and vibration. While liquid cooling adds capital expenditure, estimated at around an additional one million dollars per megawatt for turnkey developments, these costs are typically passed to tenants, who accept them because of the performance gains realized.
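A quick calculation shows why liquid is so much more effective than air as a heat carrier. The sketch below estimates the coolant flow needed for a given heat load, assuming plain water as the coolant and a hypothetical 10 K temperature rise across the loop. Real loops often use water-glycol mixtures or dielectric fluids with different properties, so treat the result as an order-of-magnitude guide.

```python
# Coolant flow needed to absorb a rack's heat in a liquid loop.
# Assumes plain water; real coolants have different properties.
WATER_SPECIFIC_HEAT = 4186.0  # J/(kg*K) (assumed)
WATER_DENSITY = 1.0           # kg per litre (assumed)


def coolant_flow_lpm(heat_kw: float, delta_t_k: float = 10.0) -> float:
    """Litres per minute of water needed to absorb heat_kw at the given temperature rise."""
    mass_flow_kg_s = heat_kw * 1000.0 / (WATER_SPECIFIC_HEAT * delta_t_k)
    return mass_flow_kg_s / WATER_DENSITY * 60.0


# Flow for the top-end rack density mentioned in the text.
print(f"{coolant_flow_lpm(120):.0f} L/min for a 120 kW rack")
```

For a 120 kW rack this works out to roughly 170 litres per minute, a flow that fits in ordinary pipework, whereas moving the same heat with air would take several cubic metres of air per second.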

The network fabric is often dubbed the ‘secret weapon’ behind optimized LLM performance. AI workloads require an ultra-high-speed, ultra-low-latency network to facilitate the massive parallel synchronization needed across thousands of interconnected accelerators. Traditional enterprise networking traffic patterns are predominantly north-south (client-server), whereas LLMs generate overwhelming volumes of east-west traffic (GPU-to-GPU communication).

To meet these requirements, AI-ready networks rely on specialized high-speed interconnects like NVLink, InfiniBand (NDR/XDR), and 400G/800G Ethernet fabrics. Crucially, these fabrics must be lossless and leverage technologies like Remote Direct Memory Access (RDMA) to ensure that the massive data transfers required for training do not suffer from congestion or latency spikes, which can leave accelerators idle and severely extend training time.
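To give a sense of why link bandwidth dominates training time, the sketch below estimates how long one gradient synchronization takes using a ring all-reduce. Ring all-reduce is one common collective algorithm and is used here as an assumption; the article does not specify which collectives a given cluster runs. In a ring, each GPU sends and receives 2(N-1)/N times the message size. The model ignores per-hop latency and protocol overhead, and the message size and link speed are hypothetical.

```python
def ring_allreduce_seconds(message_bytes: float, num_gpus: int, link_gbps: float) -> float:
    """Bandwidth-only estimate of ring all-reduce time.

    Each GPU transfers 2 * (N - 1) / N * message_bytes over its link.
    Latency and protocol overhead are ignored (simplifying assumption).
    """
    if num_gpus < 2:
        return 0.0  # nothing to synchronize with a single GPU
    bytes_per_second = link_gbps * 1e9 / 8
    traffic_per_gpu = 2 * (num_gpus - 1) / num_gpus * message_bytes
    return traffic_per_gpu / bytes_per_second


# Hypothetical case: 14 GB of gradients (7B parameters at 2 bytes each),
# 8 GPUs, 400 Gb/s per link.
print(f"{ring_allreduce_seconds(14e9, 8, 400):.2f} s per synchronization")
```

Under these assumptions a single synchronization takes about half a second, and it recurs on every training step, so any congestion that lowers effective bandwidth is paid thousands of times over a training run.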

The logical architecture of the network is equally important, requiring sophisticated topologies beyond simple rack-to-rack connections. Common LLM topologies implemented within these clusters include Fat-tree, Dragonfly+, Torus/Mesh, and Cube-mesh architectures. These designs aim to minimize hop counts and maximize bandwidth between any two points in the massive accelerator cluster, ensuring that data movement keeps pace with the sheer computational capability of the chips.
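As one worked example of these topologies, the sketch below sizes a classic three-tier k-ary fat-tree built from identical k-port switches. In that textbook construction there are k pods, each with k/2 edge and k/2 aggregation switches, plus (k/2)² core switches, supporting k³/4 endpoints at full bisection bandwidth. Production AI fabrics may use different tiers or oversubscription ratios, so this is an illustration of the scaling rule and not a design for any particular cluster.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class FatTree:
    k: int                      # ports per switch
    hosts: int                  # endpoints (e.g. GPU NICs) supported
    edge_switches: int
    aggregation_switches: int
    core_switches: int

    @property
    def total_switches(self) -> int:
        return self.edge_switches + self.aggregation_switches + self.core_switches


def size_fat_tree(k: int) -> FatTree:
    """Size a three-tier k-ary fat-tree built from k-port switches (k must be even)."""
    if k < 2 or k % 2 != 0:
        raise ValueError("k must be an even integer >= 2")
    half = k // 2
    return FatTree(
        k=k,
        hosts=k * half * half,          # k pods * (k/2 edge switches) * (k/2 hosts each)
        edge_switches=k * half,
        aggregation_switches=k * half,
        core_switches=half * half,
    )


tree = size_fat_tree(64)
print(tree.hosts, tree.total_switches)
```

With 64-port switches the construction reaches 65,536 endpoints using 5,120 switches, which shows how quickly switch count and cabling grow as clusters scale to tens of thousands of accelerators.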

Supporting this internal network infrastructure requires robust external connectivity. AI data centers incorporate high-capacity fiber pathways, dense fiber trays, multiple redundant fiber routes, and large-scale optical networking solutions. This capacity is essential for moving petabytes of training data into the facility and connecting the massive computing clusters to the broader internet and cloud ecosystems they serve.

The complexity of the hardware architecture extends beyond the processors themselves to encompass storage systems. An advanced storage architecture is necessary, often comprising a layered approach. This includes all-flash NVMe storage for the fastest data access, large JBOF (Just a Bunch Of Flash) enclosures, and petascale storage systems optimized for software-defined data centers, all working in concert to feed the high-speed accelerators efficiently.

The concept of “co-design” is paramount in LLM infrastructure planning. This involves jointly optimizing hardware components—such as FLOPS capability, High Bandwidth Memory (HBM) capacity, and network topology—alongside software and optimization strategies like parallelism techniques. Achieving high Model FLOPS Utilization (MFU) often requires complex optimization choices, as suboptimal configurations can lead to substantial performance penalties, wasting precious compute resources.

LLM clusters at scale represent the zenith of this infrastructure. These are systems involving thousands of GPUs engaged in massive parallel synchronization, often utilizing specialized hardware collectives to manage inter-GPU communication efficiently. The stringent requirements for these clusters include extreme density, liquid cooling integration, and networking that ensures ultra-low latency across the entire synchronized fabric.

The two main operational phases of AI—training and inferencing—place distinct demands on the data center. Training, which involves analyzing vast datasets to create the model, is characterized by high, sustained utilization and a focus on computational throughput. Inferencing, where the LLM responds to user prompts, requires real-time, low-latency processing, making proximity to the end-user (Inference Zones) and optimized network connections critical.

Model serving infrastructure for inferencing requires memory-optimized nodes and layered storage to quickly retrieve model weights. Furthermore, effective deployment often relies on multi-cluster balancing and orchestration layers that can efficiently allocate resources. This requires sophisticated software platforms specifically designed for AI workloads, often replacing or overlaying legacy virtualization solutions.

Organizations deploy LLM infrastructure using two primary models: hyperscale and colocation. Hyperscale data centers, owned and operated by major cloud providers, are immense facilities engineered for extreme scalability and large-scale generative AI workloads. Colocation facilities allow other businesses to rent physical space, power, and bandwidth, enabling them to house their own AI chips and benefit from hyperscale capabilities without the major capital investment of building their own facility.

The investment landscape has shifted significantly, with long-term build-to-suit leases becoming common, particularly with hyperscalers who commit to large-scale, AI-first campuses. These new developments involve securing large amounts of power and building accelerator-optimized halls with custom power distribution and specialized cooling loops. Rapid growth in AI compute requirements, combined with grid bottlenecks and long development lead times, means that demand continues to significantly outstrip supply in primary markets.

Security and resilience are non-negotiable features of AI data centers, particularly given the value of proprietary AI research and model intellectual property (IP). Physical security measures are rigorous, including private cages, mantrap entry systems, and biometric verification. Digitally, infrastructure requires cluster segmentation, secure GPU scheduling, hardware root-of-trust enforcement, and comprehensive east-west traffic monitoring to ensure data integrity and prevent unauthorized access within the parallel computing environment.

Moreover, resilience is built into the design through extensive redundancy, such as N+1 or N+N redundancy for power and cooling loops, fiber route diversity, and automated failover for complex network fabrics. A poorly configured system or a failure in a physical system, such as a cooling interruption, can lead to severe performance degradation or total operational failure, underscoring the need for meticulous engineering and continuous process control.
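The value of a spare unit can be quantified with a simple binomial model. The sketch below computes the probability that at least the required number of units (for example, cooling pumps or power modules) are working, given how many are installed. It assumes every unit fails independently with the same availability, which is a strong simplification because real failures are often correlated. The unit count and the 99% availability figure are hypothetical.

```python
from math import comb


def system_availability(required: int, installed: int, unit_availability: float) -> float:
    """Probability that at least `required` of `installed` units are up.

    Assumes independent, identical units (a simplification; real
    failures are often correlated).
    """
    if not 0 <= required <= installed:
        raise ValueError("required must be between 0 and installed")
    p = unit_availability
    return sum(
        comb(installed, up) * p**up * (1 - p) ** (installed - up)
        for up in range(required, installed + 1)
    )


# Hypothetical loop needing 4 units, each 99% available.
print(f"N   (4 of 4): {system_availability(4, 4, 0.99):.6f}")
print(f"N+1 (4 of 5): {system_availability(4, 5, 0.99):.6f}")
print(f"N+N (4 of 8): {system_availability(4, 8, 0.99):.6f}")
```

In this toy model a single spare lifts availability from roughly 96% to about 99.9%, which illustrates why N+1 is treated as a baseline for power and cooling loops.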

The massive infrastructure transformation also necessitates a significant workforce shift. Data center teams must transition from managing traditional server environments to operating AI-optimized infrastructure, which includes expertise in GPU cluster management, high-bandwidth networking, and complex specialized cooling systems. After years of relying on cloud services, many organizations lack the internal data center expertise required to manage these demanding, bespoke physical environments effectively.

Network architects face the unique challenge of designing for AI-first traffic patterns and high-throughput demands that are fundamentally different from traditional enterprise networking. They need deep expertise in ultra-low latency technologies, lossless fabrics, and sophisticated interconnect solutions like InfiniBand. Furthermore, cost engineers must master new financial models that optimize hybrid compute portfolios, factoring in GPU utilization rates, inference economics, and complex cost trade-offs.

Looking ahead, infrastructure evolution continues to accelerate. The focus is moving toward custom silicon integration, deploying specialized processors beyond general-purpose chips. Emerging technologies like neuromorphic computing, designed for pattern recognition, and optical computing, aimed at energy-efficient data processing, are being factored into future designs, which calls for adaptable and modular data center construction.

The eventual integration of quantum computing will likely introduce another paradigm shift in data center design. Quantum systems demand infrastructure vastly different from current requirements, including highly specialized cooling (cryogenics), advanced form factors, and extreme sensitivity controls to manage noise and temperature fluctuations. Managing this future hybrid architecture, encompassing traditional, neuromorphic, and quantum components, will require new categories of operational expertise and orchestration tools.

To ease the strain on centralized data centers, there is growing emphasis on ‘LLMs on the edge’: running AI systems natively on devices like PCs, smartphones, and tablets. This approach relies on much smaller models, obtained by pruning or otherwise shrinking the parameter count (e.g., from 175 billion down to two billion). It offers an alternative path to manage the exponential growth of AI processing needs and mitigate the risk of overwhelming current data center capacity.
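The memory arithmetic behind this shift is straightforward: the space needed just to hold the weights is the parameter count multiplied by the bytes stored per parameter. The sketch below applies that to the two model sizes mentioned above. The numeric precisions and the 80 GB accelerator memory figure are assumptions for illustration, and the estimate ignores activations and key-value caches, which add to the real footprint.

```python
import math


def weight_memory_gb(params: float, bytes_per_param: float) -> float:
    """Memory (GB, decimal) needed to hold model weights only."""
    return params * bytes_per_param / 1e9


def min_devices_for_weights(params: float, bytes_per_param: float, device_memory_gb: float) -> int:
    """Fewest devices whose combined memory can hold the weights (ignores activations and caches)."""
    return math.ceil(weight_memory_gb(params, bytes_per_param) / device_memory_gb)


# 175B parameters at 16-bit precision (2 bytes each) on assumed 80 GB accelerators.
print(weight_memory_gb(175e9, 2), min_devices_for_weights(175e9, 2, 80))

# 2B parameters quantized to 4 bits (0.5 bytes each), a size that fits on a phone.
print(weight_memory_gb(2e9, 0.5))
```

Under these assumptions the larger model needs 350 GB and at least five accelerators for weights alone, while the smaller quantized model needs about 1 GB, which is within reach of consumer devices.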

LLMs on the edge provide several benefits, including reduced latency for querying, enhanced user privacy by keeping data local, and improved reliability. As smaller LLMs gain momentum and hardware prices for specialized edge accelerators decline, this distributed architecture promises to reduce the overall amount of AI processing that needs to be handled by massive, power-intensive, centralized data center factories.

In summary, LLM data center infrastructure requires an integrated understanding of chemical, electrical, and physical engineering, fluid dynamics, and cutting-edge silicon architecture. From liquid cooling and petascale storage systems to gigawatt-scale power capacity and ultra-low-latency network fabrics, every component must be tightly integrated to consistently deliver the safe, high-quality computational power that underpins the future of artificial intelligence.