The relentless demand for computational power, driven by advancements in Artificial Intelligence, Machine Learning, High-Performance Computing (HPC), and cloud infrastructure, has propelled data center density to unprecedented levels. This surge in power density directly translates to a massive generation of heat, which air-cooling methodologies are increasingly struggling to manage efficiently or economically. Consequently, data center liquid cooling architecture has transitioned from a niche solution for supercomputers to a critical, mainstream necessity for modern enterprise and hyperscale facilities.

Liquid cooling, broadly defined, uses a fluid (typically water or a specialized dielectric coolant) to carry heat away from IT components far more effectively than air. Per unit volume, water absorbs roughly 3,500 times as much heat as air for the same temperature rise (per unit mass the advantage is closer to fourfold), making it an inherently superior medium for thermal management. This fundamental difference is the catalyst behind the architectural shift occurring across the industry.
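
The 3,500x figure refers to volumetric heat capacity, the product of density and specific heat. The short calculation below reproduces it from rounded textbook property values near room temperature; the constants are assumptions, and exact figures shift with temperature and pressure.

```python
# Rounded property values near 25 °C and 1 atm (assumptions; real values vary with conditions).
WATER_DENSITY = 997.0  # kg/m^3
WATER_CP = 4181.0      # specific heat, J/(kg*K)
AIR_DENSITY = 1.18     # kg/m^3
AIR_CP = 1006.0        # specific heat, J/(kg*K)

def volumetric_heat_capacity(density: float, cp: float) -> float:
    """Heat absorbed per cubic metre per kelvin of temperature rise, in J/(m^3*K)."""
    return density * cp

water = volumetric_heat_capacity(WATER_DENSITY, WATER_CP)
air = volumetric_heat_capacity(AIR_DENSITY, AIR_CP)
print(f"per kilogram: water holds {WATER_CP / AIR_CP:.1f}x the heat of air")
print(f"per cubic metre: water holds {water / air:,.0f}x the heat of air")
```

Run as written, this gives about 4x per kilogram and about 3,500x per cubic metre. The volumetric ratio is the one that matters in a data center, because cooling capacity is limited by how much fluid can be pushed through a confined space.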

Traditional air-cooling architecture relies on computer room air conditioners (CRACs) or computer room air handlers (CRAHs) to circulate chilled air through raised floors or dedicated aisles. This approach is effective for densities under roughly 10 kW per rack, but modern racks frequently exceed 30 kW and sometimes reach 100 kW or more. At these densities, air cannot be moved through tightly packed server components fast enough to carry the heat away, leading to hot spots, rising fan power consumption, and ultimately thermal throttling or equipment failure. Liquid cooling architectures address this limitation directly by placing the cooling medium much closer to the heat source.
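
To see why air runs out of headroom, the sketch below applies the sensible-heat relation, flow = P / (rho * cp * dT), to racks of 10, 30, and 100 kW. The 12 K air temperature rise and 10 K water temperature rise are illustrative assumptions, not design values.

```python
# Rounded property values near room temperature (assumptions for illustration).
RHO_AIR, CP_AIR = 1.18, 1006.0        # kg/m^3, J/(kg*K)
RHO_WATER, CP_WATER = 997.0, 4181.0   # kg/m^3, J/(kg*K)
M3S_TO_CFM = 2118.88                  # 1 m^3/s expressed in cubic feet per minute

def volumetric_flow(heat_w, delta_t_k, rho, cp):
    """Volumetric flow (m^3/s) needed to carry heat_w watts at a delta_t_k temperature rise."""
    return heat_w / (rho * cp * delta_t_k)

for rack_kw in (10, 30, 100):
    heat_w = rack_kw * 1000
    air_m3s = volumetric_flow(heat_w, 12.0, RHO_AIR, CP_AIR)        # assumed 12 K air rise
    water_m3s = volumetric_flow(heat_w, 10.0, RHO_WATER, CP_WATER)  # assumed 10 K water rise
    print(f"{rack_kw:>3} kW rack: air {air_m3s * M3S_TO_CFM:,.0f} CFM, "
          f"water {water_m3s * 60_000:.1f} L/min")
```

Under these assumptions a 30 kW rack needs roughly 4,500 CFM of air, while about 43 L/min of water carries the same heat.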

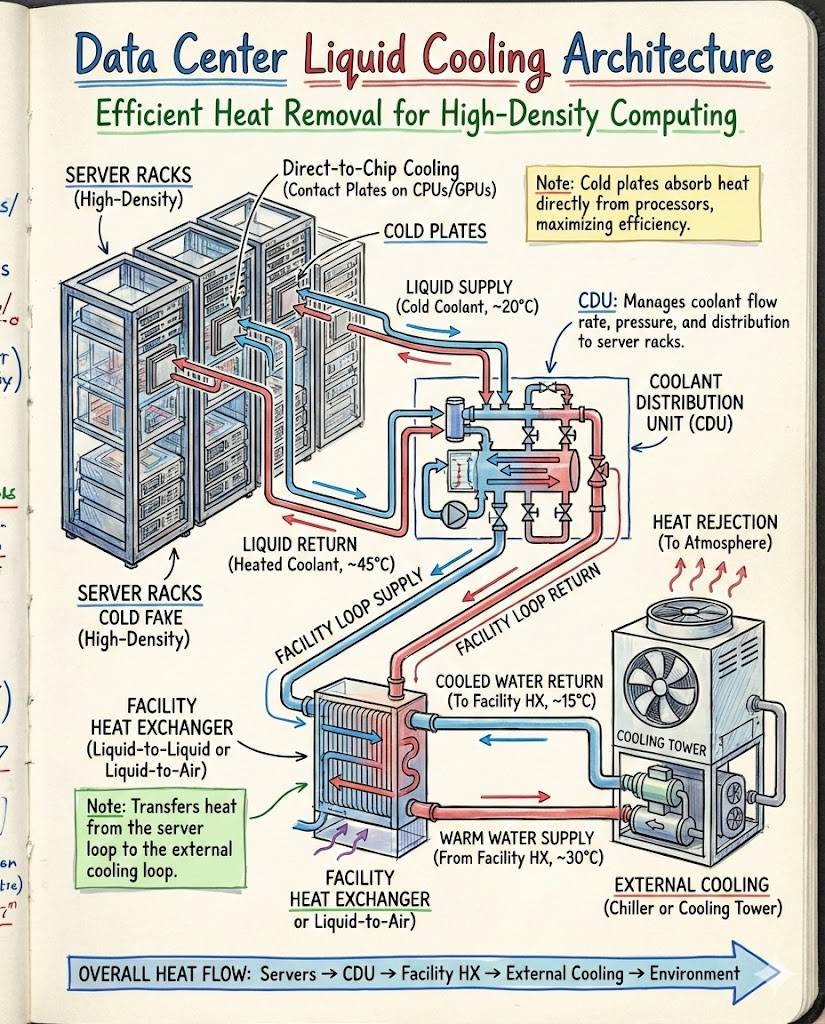

Liquid cooling is generally categorized into two primary architectures: direct-to-chip (DTC) cooling and immersion cooling. DTC cooling, also known as cold plate cooling (and often operated as warm-water cooling), uses specialized cooling blocks (cold plates) mounted directly onto the highest heat-generating components, such as CPUs, GPUs, and memory modules. The liquid, often treated water or a water/glycol mixture, flows through these cold plates and absorbs heat before returning to a central heat exchange unit.

The strength of the DTC architecture lies in its targeted application. Because the processors and memory typically generate 70% to 80% of a server’s heat, cooling these components directly leaves the remaining, lower-density heat to be handled by residual air circulation or, increasingly, by rear-door heat exchangers. DTC systems can operate with warm water (e.g., 40°C to 50°C), which allows “free cooling” (heat rejection without compressor-based refrigeration) for a much greater portion of the year, drastically reducing or eliminating the need for mechanical chillers. This flexibility in operating temperature is a key reason liquid-cooled facilities can achieve Power Usage Effectiveness (PUE) ratios as low as roughly 1.05.
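
The 70% to 80% capture figure has a practical consequence: the remainder still leaves the server as heat in air. The minimal sketch below splits the load of a hypothetical 100 kW rack; the function name is illustrative.

```python
def dtc_heat_split(rack_kw, capture_fraction):
    """Split rack heat into the part removed by cold plates and the residual left for air."""
    if not 0.0 <= capture_fraction <= 1.0:
        raise ValueError("capture_fraction must be between 0 and 1")
    liquid_kw = rack_kw * capture_fraction
    return liquid_kw, rack_kw - liquid_kw

for capture in (0.70, 0.80):
    liquid_kw, air_kw = dtc_heat_split(100.0, capture)
    print(f"capture {capture:.0%}: {liquid_kw:.0f} kW to liquid, {air_kw:.0f} kW to air")
```

Even at 80% capture, 20 kW per rack remains for air, about double the density at which conventional air cooling starts to struggle. This helps explain why dense DTC racks are often paired with rear-door heat exchangers.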

Implementing a DTC architecture requires infrastructure built around two fluid loops. The primary (facility) loop handles bulk heat rejection, connecting to dry coolers, cooling towers, or other external heat sinks. The secondary (IT) loop, typically operating at lower pressure, connects directly to the server racks through quick-connect, dripless couplings. Central to this design is the Coolant Distribution Unit (CDU), which acts as the interface between the two loops and manages the flow, pressure, temperature, and monitoring of the liquid delivered to the racks. A CDU contains pumps, filtration, and a heat exchanger that transfers heat from the secondary loop to the building’s primary facility loop.
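
The CDU’s role as the interface between loops can be made concrete with a steady-state heat balance. The sketch below assumes plain water in both loops, no heat losses, and a fixed heat-exchanger approach temperature. The function and field names are hypothetical, and real CDU selection relies on vendor performance data.

```python
from dataclasses import dataclass

# Assumes plain water in both loops; water/glycol mixtures have a lower specific heat.
RHO, CP = 997.0, 4181.0  # kg/m^3, J/(kg*K)

@dataclass
class CduOperatingPoint:
    secondary_supply_c: float   # temperature delivered to the racks
    secondary_return_c: float   # temperature coming back from the racks
    secondary_flow_lpm: float   # IT-side flow, litres per minute
    facility_flow_lpm: float    # facility-side flow, litres per minute

def cdu_operating_point(it_load_kw, facility_supply_c, approach_k, secondary_dt_k, facility_dt_k):
    """Steady-state heat balance across a liquid-to-liquid CDU heat exchanger.

    Both loops carry the same heat (losses ignored). approach_k is the assumed
    gap between the facility supply and secondary supply temperatures.
    """
    def flow_lpm(delta_t_k):
        return it_load_kw * 1000 / (RHO * CP * delta_t_k) * 60_000

    supply_c = facility_supply_c + approach_k
    return CduOperatingPoint(
        secondary_supply_c=supply_c,
        secondary_return_c=supply_c + secondary_dt_k,
        secondary_flow_lpm=flow_lpm(secondary_dt_k),
        facility_flow_lpm=flow_lpm(facility_dt_k),
    )

print(cdu_operating_point(it_load_kw=500, facility_supply_c=32, approach_k=3,
                          secondary_dt_k=10, facility_dt_k=8))
```

With a 32°C facility supply and a 3 K approach, the racks receive 35°C water that returns at 45°C, which falls in the warm-water range typical of DTC systems. The facility loop needs more flow than the secondary loop here only because it was given a smaller temperature rise.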

Immersion cooling represents a more radical departure from traditional data center design. In this architecture, entire servers are submerged in a thermally conductive but electrically non-conductive (dielectric) fluid, typically in tanks that take the place of conventional racks. There are two major subtypes: single-phase and two-phase immersion cooling.

Single-phase immersion uses a fluid that remains liquid throughout the cooling cycle. The fluid circulates within the tank, absorbs heat, and then passes through a liquid-to-liquid heat exchanger located inside the tank or adjacent to it. These fluids are typically engineered mineral oils or synthetic fluids designed for good heat transfer and material compatibility. The key advantages are the complete elimination of server fans and highly uniform component temperatures, which can extend hardware lifespan.

Two-phase immersion cooling employs a specialized fluid with a low boiling point. As the server components heat up, the fluid boils at their surfaces and turns into vapor. The vapor rises to a condenser coil at the top of the tank, where it condenses into liquid and falls back into the bath around the submerged components. Because this process exploits the latent heat of vaporization, it is exceptionally effective at removing large amounts of heat quickly. However, two-phase systems depend on specialized fluids that are often proprietary and expensive, and they require careful handling and vapor containment.
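
In a two-phase system the heat goes into boiling rather than into warming the fluid, so the quantity to size is the vaporization rate, m_dot = P / h_fg. The back-of-the-envelope sketch below assumes a latent heat of about 100 kJ/kg. That is the right order of magnitude for common engineered fluorinated fluids, but the exact value is fluid-specific.

```python
def vapor_generation_kg_s(heat_kw, latent_heat_kj_per_kg):
    """Mass of fluid boiled per second when all heat goes into vaporization: m_dot = P / h_fg."""
    return heat_kw / latent_heat_kj_per_kg

# Assumed latent heat; check the datasheet of the specific fluid being considered.
H_FG_KJ_PER_KG = 100.0
m_dot = vapor_generation_kg_s(100.0, H_FG_KJ_PER_KG)
print(f"{m_dot:.2f} kg/s of vapor must be condensed for a 100 kW tank")
```

For a 100 kW tank, the condenser must return about 1 kg of vapor per second to the bath, while the liquid itself stays near its boiling point instead of heating up.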

The architectural planning for immersion cooling differs substantially from DTC. Immersion requires specialized tanks, and the server hardware itself must be adapted, often by removing components such as spinning hard drives or batteries that cannot tolerate submersion in certain fluids. The data hall environment also changes: noise drops sharply because server fans are absent, and airflow management becomes irrelevant within the tank boundaries. However, safety protocols for handling the dielectric fluids and ensuring system containment become paramount.

Regardless of whether DTC or immersion is chosen, liquid cooling architectures demand meticulous attention to plumbing, materials science, and leak detection. Plumbing materials must be compatible with the chosen coolant to prevent corrosion or degradation. Leak detection systems, often built from sensors integrated into the rack base or server chassis, must raise immediate alerts that pinpoint where a leak has occurred, so that damage to expensive IT equipment can be contained.
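
Leak detection usually combines direct sensing with inference from loop telemetry. The sketch below shows one way such checks might be combined. The data structure, thresholds, and alarm messages are hypothetical and are not taken from any monitoring product.

```python
from dataclasses import dataclass

@dataclass
class LoopReading:
    supply_flow_lpm: float   # flow leaving the CDU toward the racks
    return_flow_lpm: float   # flow coming back from the racks
    pressure_kpa: float      # secondary loop pressure
    spot_sensor_wet: bool    # e.g., a sensing cable under the rack reports moisture

def leak_alarms(reading, baseline_pressure_kpa,
                max_flow_mismatch_lpm=2.0, max_pressure_drop_kpa=15.0):
    """Return alarm messages for one reading; thresholds are illustrative assumptions."""
    alarms = []
    if reading.spot_sensor_wet:
        alarms.append("liquid detected by spot sensor")
    # In a sealed loop, supply and return flow should match within meter tolerance.
    if reading.supply_flow_lpm - reading.return_flow_lpm > max_flow_mismatch_lpm:
        alarms.append("supply/return flow mismatch")
    # A pressure drop below baseline can indicate coolant leaving the loop.
    if baseline_pressure_kpa - reading.pressure_kpa > max_pressure_drop_kpa:
        alarms.append("loop pressure below baseline")
    return alarms

print(leak_alarms(LoopReading(120.0, 116.5, 180.0, False), baseline_pressure_kpa=200.0))
```

Combining independent signals lets an operator distinguish a wet sensor under one rack from a loop-wide loss of coolant.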

A significant benefit driving the adoption of these architectures is a substantial improvement in energy efficiency. By moving cooling closer to the source and using warmer operating temperatures, liquid cooling dramatically reduces the energy spent on cooling, which has historically accounted for up to 40% of a data center’s total energy usage. This reduction shows up directly in the PUE metric (total facility energy divided by IT equipment energy), making facilities greener and cheaper to operate.
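
PUE is a simple ratio, and the sketch below computes it for two hypothetical facilities. The annual energy figures are made up purely to illustrate the calculation.

```python
def pue(it_energy, cooling_energy, other_overhead_energy):
    """Power Usage Effectiveness: total facility energy divided by IT equipment energy.

    Any consistent energy unit works (e.g., MWh per year).
    """
    total = it_energy + cooling_energy + other_overhead_energy
    return total / it_energy

# Hypothetical annual figures in MWh, chosen only to illustrate the calculation.
air_cooled = pue(it_energy=1000, cooling_energy=700, other_overhead_energy=100)
liquid_cooled = pue(it_energy=1000, cooling_energy=80, other_overhead_energy=100)
print(f"air-cooled PUE: {air_cooled:.2f}, liquid-cooled PUE: {liquid_cooled:.2f}")
```

In the air-cooled case, cooling is 700 of 1,800 MWh, about 39% of the total. One caveat: server fans count as IT load, so removing them shrinks the denominator and can make PUE look slightly worse even as total energy falls. Tracking total facility energy alongside PUE avoids that trap.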

Furthermore, liquid cooling enables greater rack density. Previously, air cooling dictated the spacing and layout of IT equipment; liquid cooling removes this constraint, allowing data centers to pack significantly more computing power into the same physical footprint. This consolidation is critical for urban data centers, where space is at a premium, and for HPC clusters that require computing nodes to sit close together.
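
The footprint effect of density comes down to simple division. The sketch below assumes a hypothetical 2 MW IT load.

```python
import math

def racks_needed(it_load_kw, kw_per_rack):
    """Minimum number of racks to host the load at a given per-rack density."""
    return math.ceil(it_load_kw / kw_per_rack)

for density_kw in (10, 30, 100):
    print(f"{density_kw:>3} kW/rack -> {racks_needed(2000, density_kw)} racks for 2 MW")
```

Moving from 10 kW to 100 kW racks cuts the rack count from 200 to 20, before accounting for the floor space that CDUs and service clearances require.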

The architectural evolution also opens the door to effective heat reuse. Because the coolant often returns at higher temperatures (e.g., 45°C to 60°C in DTC systems), this waste heat is now warm enough to be economically leveraged for other purposes, such as heating office spaces, domestic hot water, or even supplying heat to district heating networks. This turns a waste product into a valuable resource, further improving the overall sustainability and total cost of ownership of the data center operation.
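
How much heat is actually available for reuse depends on more than the return temperature. The sketch below multiplies IT load by average utilization, the fraction of heat captured in liquid, and the fraction a heat consumer can absorb over the year. It assumes nearly all IT power ends up as heat; the function name and all input values are hypothetical.

```python
HOURS_PER_YEAR = 8760

def recoverable_heat_mwh(it_load_kw, utilization, capture_fraction, reuse_fraction):
    """Annual heat delivered to a reuse application, in MWh (assumes IT power ~= heat output)."""
    return it_load_kw * utilization * capture_fraction * reuse_fraction * HOURS_PER_YEAR / 1000

# Hypothetical: 1 MW IT load, 80% average utilization, 75% captured in liquid,
# and a consumer (e.g., a heating network) that can use half of it across the seasons.
print(f"{recoverable_heat_mwh(1000, 0.8, 0.75, 0.5):,.0f} MWh/year")
```

The reuse fraction often dominates: a heating network may absorb far less heat in summer than in winter, regardless of how much the data center produces.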

However, the transition to liquid cooling architectures is not without complexity. The initial capital expenditure for specialized components such as CDUs, cold plates, tanks, and dripless connectors can be high. Integrating liquid cooling into existing data centers (a brownfield deployment) also poses unique challenges: retrofitting infrastructure, managing compatibility between legacy air-cooled equipment and new liquid-cooled systems, and training technical staff in fluid dynamics and specialized maintenance procedures.

The industry is currently seeing the rise of hybrid architectures as a practical solution. Many facilities deploy DTC cooling for their highest power-density racks (e.g., AI/GPU clusters) while maintaining air cooling for less intensive infrastructure (e.g., storage or general-purpose servers). This strategy allows data centers to maximize efficiency where it matters most, manage transitional risks, and spread capital investment over time.
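
A hybrid layout starts with a per-rack decision. The sketch below uses a single density threshold. The 30 kW cut-off and the rack names are hypothetical, and a real policy would likely weigh more than density alone.

```python
def assign_cooling(rack_loads_kw, dtc_threshold_kw=30.0):
    """Split racks into DTC and air-cooled groups by design power density."""
    plan = {"dtc": [], "air": []}
    for rack, load_kw in rack_loads_kw.items():
        plan["dtc" if load_kw >= dtc_threshold_kw else "air"].append(rack)
    return plan

racks = {"gpu-01": 80, "gpu-02": 95, "storage-01": 8, "web-01": 12}  # hypothetical inventory
print(assign_cooling(racks))
```

The resulting split also sizes the two halves of the infrastructure: the DTC group sets the required CDU capacity, and the air group sets the remaining CRAH load.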

Standardization is slowly catching up with innovation in liquid cooling. Industry efforts, such as the Open Compute Project’s Advanced Cooling Solutions work and ASHRAE TC 9.9 guidance, aim to harmonize interfaces and protocols so that servers from various vendors can integrate seamlessly with different liquid cooling infrastructures. This includes defining standardized CDU capabilities, cold plate attachment methods, and fluid specifications, which will be essential for widespread adoption and marketplace competition.

Data center liquid cooling architecture thus represents a paradigm shift driven by the physics of heat generation in modern computing. Whether through precise direct-to-chip cold plates or comprehensive immersion tanks, these systems offer major efficiency gains, higher density, and sustainability advantages over traditional air cooling. Mastering the selection, deployment, and operation of CDUs, pump systems, and specialized fluids is now central to the operational excellence of any facility that aims to support the next generation of high-power computing workloads. The future of the data center is liquid, turning thermal management from an expensive necessity into a highly integrated, efficient utility.

Ongoing development in thermal management materials, particularly the refinement of dielectric fluids for immersion cooling, continues to lower barriers to entry by addressing concerns over longevity and cost. Newer generations of fluids are being engineered for lower environmental footprints and better thermal properties across a wider range of operating temperatures, making immersion systems more adaptable to locations with varying climate conditions. This continuous improvement keeps liquid cooling a dynamic and increasingly viable option for data center operations of all scales, from edge computing nodes to hyperscale cloud facilities.

Maintenance and operational routines are also becoming more specialized. Whereas air cooling primarily involves filter replacement and air handler upkeep, liquid cooling introduces procedures for maintaining fluid purity, managing pump redundancy, and validating the integrity of quick-connect couplings and piping networks. Successful liquid-cooled facilities invest heavily in training their facility managers and IT staff to handle these new operational complexities, treating the cooling system as an integrated, mission-critical utility rather than a separate building system.
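
Pump redundancy can be checked with simple arithmetic. The sketch assumes an N+1 policy and identical pumps whose flows simply add, which ignores real pump and system curves. The function names and figures are hypothetical.

```python
import math

def pumps_required(design_flow_lpm, pump_capacity_lpm, standby=1):
    """Duty pumps needed to meet design flow, plus standby units (N+standby)."""
    duty = math.ceil(design_flow_lpm / pump_capacity_lpm)
    return duty + standby

def survives_single_pump_failure(online_pumps, design_flow_lpm, pump_capacity_lpm):
    """True if the remaining pumps still meet design flow after any one pump fails."""
    return (online_pumps - 1) * pump_capacity_lpm >= design_flow_lpm

n = pumps_required(design_flow_lpm=700, pump_capacity_lpm=400)
print(n, survives_single_pump_failure(n, 700, 400))
```

A routine check like this is worth repeating whenever IT load is added to a loop, because design flow grows while the installed pump count stays fixed.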

The selection process for a liquid cooling architecture must be holistic, beginning with a detailed analysis of the expected power density profile of the IT load. Facilities planning for future expansion must choose an infrastructure that can scale easily, often favoring modular CDU deployments that can be added incrementally as the IT load grows. This careful front-end planning prevents expensive retrofitting down the line and ensures the architecture aligns with the business’s long-term technology strategy, particularly regarding anticipated AI and HPC deployments.
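
Incremental CDU deployment can be planned against a load forecast. The sketch below assumes a hypothetical 800 kW CDU module, an N+1 layout, and a made-up four-period forecast. It never removes installed units when the forecast dips.

```python
import math

def cdu_plan(load_forecast_kw, cdu_capacity_kw, spares=1):
    """CDUs installed per planning period for an N+spares layout.

    Installed units never decrease, even if the forecast dips.
    """
    plan, installed = [], 0
    for load_kw in load_forecast_kw:
        needed = math.ceil(load_kw / cdu_capacity_kw) + spares
        installed = max(installed, needed)
        plan.append(installed)
    return plan

print(cdu_plan([400, 900, 1500, 2400], cdu_capacity_kw=800))
```

The output shows when each additional module must be in place. Reserving piping connections for the final count up front is what keeps later additions from turning into retrofits.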

Risk mitigation is also fundamentally tied to the liquid cooling architecture. While catastrophic water damage is a frequent, though often overstated, concern, modern systems incorporate multiple layers of defense: sealed closed-loop designs, pressure monitoring, and advanced leak detection integrated directly into the infrastructure management software. Compared with the common failure mode of air-cooled environments (thermal runaway caused by airflow obstruction or CRAC failure), liquid cooling systems often prove highly resilient when properly deployed.

Finally, the economic analysis extends beyond just PUE. Total Cost of Ownership (TCO) assessments for liquid cooling must incorporate reduced server fan failure rates, increased component reliability due to lower temperature variability, potential revenue from heat reuse, and reduced real estate costs enabled by higher density. When these factors are aggregated, liquid cooling often proves to be the most cost-effective solution for supporting compute-intensive workloads over the operational lifespan of the data center.
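
A TCO comparison can be framed as the present value of each option’s costs over its lifetime. The sketch below is a minimal discounted-cost model with hypothetical inputs. Fan failures, real estate, and reliability effects would enter through the capex and annual opex figures rather than as separate terms. The result depends entirely on the numbers supplied, so it illustrates the method rather than supporting any conclusion.

```python
def lifecycle_cost(capex, annual_opex, annual_heat_revenue, years, discount_rate):
    """Present value of ownership cost: upfront capex plus discounted net yearly cost."""
    total = capex
    for year in range(1, years + 1):
        total += (annual_opex - annual_heat_revenue) / (1 + discount_rate) ** year
    return total

# Hypothetical inputs in arbitrary currency units, not benchmark data.
air = lifecycle_cost(capex=1.0e6, annual_opex=400e3, annual_heat_revenue=0,
                     years=10, discount_rate=0.07)
liquid = lifecycle_cost(capex=1.6e6, annual_opex=250e3, annual_heat_revenue=30e3,
                        years=10, discount_rate=0.07)
print(f"air: {air:,.0f}  liquid: {liquid:,.0f}")
```

Discounting matters because liquid cooling typically front-loads cost into capex while its savings arrive over many years, so a higher discount rate narrows its advantage.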