The rise of Artificial Intelligence (AI) has fundamentally reshaped the landscape of digital defense, necessitating the development and adoption of robust AI Cybersecurity Frameworks. These frameworks are not merely updates to existing security protocols; they represent a holistic, integrated strategy designed to govern, protect, detect, and respond to threats within environments where AI is both a critical asset and a potential vulnerability.

An AI Cybersecurity Framework serves as a structured set of guidelines, standards, and best practices aimed at securing the entire AI lifecycle—from data ingestion and model training to deployment and continuous operation. Its primary objective is two-fold: first, to leverage AI tools to enhance traditional cybersecurity defenses, and second, to protect the AI systems themselves from targeted attacks, such as model poisoning, data exfiltration, or adversarial evasion.

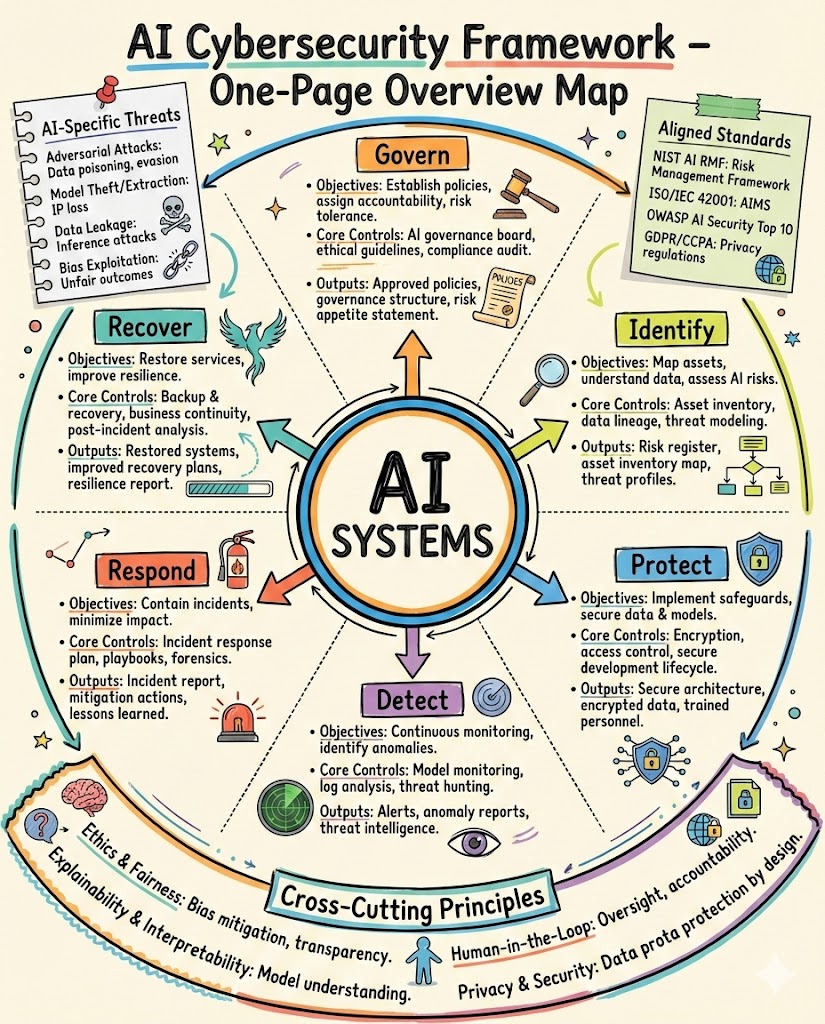

The foundation of any effective AI framework rests upon robust governance. This pillar mandates clear policies regarding data handling, model integrity, and ethical AI deployment. Governance ensures regulatory compliance, such as adherence to GDPR, HIPAA, or emerging AI-specific regulations, and establishes accountability for AI security risks. Crucially, it involves conducting comprehensive risk assessments focused on AI-specific vectors, classifying data sensitivity, and defining acceptable levels of model bias and performance drift.

Central to the framework’s structure are five functional areas adapted from the core functions of the NIST Cybersecurity Framework: Identify, Protect, Detect, Respond, and Recover, each viewed through an AI-centric lens. (Version 2.0 of the NIST framework adds a sixth function, Govern, which corresponds to the governance pillar described above.)

In the Identify function, the focus is on creating a clear inventory of all AI models, training data sets, deployment environments, and associated supply chains. Understanding the “attack surface” of AI involves mapping dependencies and vulnerabilities in the algorithms themselves, including potential weaknesses in open-source components or third-party transfer learning models. This involves rigorous documentation of model architecture and performance metrics under various conditions.
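As a rough illustration of the inventory and dependency mapping described above, the following sketch models AI assets as records and walks their dependency graph. The `AIAsset` fields, the asset names, and the `audit` helper are all hypothetical; a real inventory would likely live in an asset-management system rather than in code.

```python
from dataclasses import dataclass
from typing import Dict, List, Set, Tuple


@dataclass(frozen=True)
class AIAsset:
    name: str
    kind: str                      # e.g. "model", "dataset", "library"
    source: str                    # "internal" or "third-party"
    depends_on: Tuple[str, ...] = ()


def transitive_dependencies(inventory: Dict[str, AIAsset], root: str) -> Set[str]:
    """Return every asset name reachable from `root` through depends_on links."""
    seen: Set[str] = set()
    stack = list(inventory[root].depends_on)
    while stack:
        name = stack.pop()
        if name in seen:
            continue
        seen.add(name)
        asset = inventory.get(name)
        if asset is not None:      # undocumented assets have no known children
            stack.extend(asset.depends_on)
    return seen


def audit(inventory: Dict[str, AIAsset], root: str) -> Dict[str, List[str]]:
    """Split a model's dependencies into third-party and undocumented ones."""
    deps = transitive_dependencies(inventory, root)
    return {
        "third_party": sorted(
            d for d in deps if d in inventory and inventory[d].source == "third-party"
        ),
        "undocumented": sorted(d for d in deps if d not in inventory),
    }


# Hypothetical inventory for a single fraud-detection model.
assets = [
    AIAsset("fraud-model", "model", "internal",
            ("transactions-2024", "base-encoder", "feature-store-export")),
    AIAsset("transactions-2024", "dataset", "internal"),
    AIAsset("base-encoder", "pretrained-model", "third-party", ("tokenizer-lib",)),
    AIAsset("tokenizer-lib", "library", "third-party"),
]
inventory = {a.name: a for a in assets}
report = audit(inventory, "fraud-model")
```

Here `report["third_party"]` lists the externally sourced components the model inherits risk from, and `report["undocumented"]` lists dependencies that are referenced but missing from the inventory, which is itself a finding.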

The Protect function details the safeguards that ensure the confidentiality, integrity, and availability of AI systems. These include role-specific access controls for data scientists and model operators, securing the training environment against unauthorized data modification (which could lead to model poisoning), and encrypting data both in transit and at rest. For the models themselves, techniques such as differential privacy during training limit what a model can reveal about individual training records, while secure multi-party computation allows several parties to compute on data or model parameters without revealing them to one another.
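One simple way to detect unauthorized modification of training data is to record a keyed hash of each artifact when it is approved and verify it before every training run. The sketch below uses HMAC-SHA256 from the Python standard library; the function names and the inline key are illustrative assumptions, and in practice the key would come from a secrets manager rather than source code.

```python
import hashlib
import hmac


def sign_artifact(data: bytes, key: bytes) -> str:
    """Compute an HMAC-SHA256 tag over the raw bytes of a dataset or model file."""
    return hmac.new(key, data, hashlib.sha256).hexdigest()


def verify_artifact(data: bytes, key: bytes, expected_tag: str) -> bool:
    """Return True only if the artifact still matches the tag recorded earlier."""
    # compare_digest avoids leaking information through comparison timing.
    return hmac.compare_digest(sign_artifact(data, key), expected_tag)


# Hypothetical example: the key is hard-coded here purely for demonstration.
key = b"demo-key-do-not-use-in-production"
approved = b"user_id,amount,label\n1,120.0,0\n2,9800.0,1\n"
tag = sign_artifact(approved, key)

tampered = approved.replace(b"9800.0,1", b"9800.0,0")  # a flipped label
ok_before_training = verify_artifact(approved, key, tag)
ok_after_tampering = verify_artifact(tampered, key, tag)
```

A keyed hash is used instead of a plain checksum so that an attacker who can modify the data cannot also recompute a matching tag without access to the key.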

AI strengthens the Detect function by enabling more sophisticated threat intelligence and anomaly detection. AI-powered Security Information and Event Management (SIEM) systems use machine learning to analyze large streams of log data and identify behavioral patterns that signify an attack, often faster than rule-based systems that depend on known signatures. Because these algorithms establish baselines of normal network and user activity and flag significant deviations, they can surface zero-day attacks and insider threats that have no known signature, although the resulting alerts still need triage to separate real incidents from false positives. This detection capability is essential for managing complex, short-lived threats.
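The baseline-and-deviation idea can be shown with a deliberately simple statistical stand-in for a learned model: a z-score test against historical activity. The function name, the threshold of 3 standard deviations, and the sample login counts are assumptions chosen for illustration; production systems typically use richer features and models.

```python
from statistics import mean, stdev
from typing import List, Sequence


def flag_anomalies(baseline: Sequence[float],
                   observed: Sequence[float],
                   threshold: float = 3.0) -> List[int]:
    """Return indices of observations that deviate strongly from the baseline.

    An observation is flagged when it lies more than `threshold` sample
    standard deviations from the baseline mean.
    """
    mu = mean(baseline)
    sigma = stdev(baseline)
    if sigma == 0:
        # A perfectly flat baseline: any different value is a deviation.
        return [i for i, x in enumerate(observed) if x != mu]
    return [i for i, x in enumerate(observed) if abs(x - mu) / sigma > threshold]


# Hypothetical hourly login counts for one user account.
baseline_logins = [10, 12, 11, 9, 10, 11, 12, 10]
todays_logins = [11, 10, 48, 12]
suspicious_hours = flag_anomalies(baseline_logins, todays_logins)
```

With these numbers only the third hour (index 2) is flagged, since 48 logins is far outside the historical range while the other values sit within normal variation.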

When a threat is detected, the Respond function dictates the rapid containment and mitigation strategy. AI-powered Security Orchestration, Automation, and Response (SOAR) platforms are critical here. These tools automate initial response actions, such as isolating compromised endpoints, revoking access credentials, or rerouting traffic, reducing the time an attacker has to cause damage. For AI models specifically, the response plan must include protocols for model rollback or temporary suspension if the model is deemed compromised or exhibiting erratic, malicious behavior due to an adversarial attack.
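The rollback-or-suspend protocol for a compromised model might look like the following sketch. `ModelRegistry` and its methods are hypothetical and do not correspond to any particular model-serving product; the point is only that the response logic can be made explicit and testable ahead of an incident.

```python
from dataclasses import dataclass, field
from typing import List, Optional, Set


@dataclass
class ModelRegistry:
    versions: List[str] = field(default_factory=list)   # oldest to newest
    quarantined: Set[str] = field(default_factory=set)
    active: Optional[str] = None

    def deploy(self, version: str) -> None:
        """Register a new version and make it the one serving traffic."""
        self.versions.append(version)
        self.active = version

    def quarantine_and_rollback(self, version: str) -> Optional[str]:
        """Quarantine `version`; if it was serving, fall back to a clean one.

        Returns the version now serving traffic, or None if the service
        has been suspended because no trusted version remains.
        """
        self.quarantined.add(version)
        if self.active != version:
            return self.active              # the live model is unaffected
        for candidate in reversed(self.versions):
            if candidate not in self.quarantined:
                self.active = candidate     # roll back to newest clean version
                return candidate
        self.active = None                  # temporary suspension
        return None


registry = ModelRegistry()
for v in ("v1", "v2", "v3"):
    registry.deploy(v)

after_first_incident = registry.quarantine_and_rollback("v3")
```

In this example `v3` is quarantined and traffic falls back to `v2`. If every version were quarantined, the registry would suspend the service rather than keep serving a model that is believed to be compromised.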

The Recover function focuses on restoring system functionality and data integrity after an incident. This includes robust backup procedures for critical training datasets and model checkpoints. Post-incident analysis is vital, often employing forensic AI tools to determine the precise nature of the attack: whether it was a data breach, a model manipulation event (such as poisoning or tampering with weights), a privacy attack against the model (such as membership inference or model extraction), or a denial-of-service attack against the AI service itself. Lessons learned from the incident feed back into the framework, completing the continuous improvement cycle.
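Backups are only useful for recovery if the restored checkpoint can be trusted. A minimal sketch, assuming a manifest of SHA-256 digests was recorded at backup time and stored separately from the checkpoints, selects the newest checkpoint that still matches its digest. The function name and checkpoint names are hypothetical.

```python
import hashlib
from typing import Dict, List, Optional, Tuple


def latest_intact_checkpoint(checkpoints: List[Tuple[str, bytes]],
                             manifest: Dict[str, str]) -> Optional[str]:
    """Return the newest checkpoint whose SHA-256 digest matches the manifest.

    `checkpoints` is ordered oldest to newest as (name, raw bytes) pairs;
    `manifest` maps checkpoint names to hex digests recorded at backup time.
    """
    for name, blob in reversed(checkpoints):
        if manifest.get(name) == hashlib.sha256(blob).hexdigest():
            return name
    return None  # nothing can be trusted; retraining may be required


# Hypothetical backups, with digests recorded before the incident.
backups = [
    ("ckpt-001", b"weights-v1"),
    ("ckpt-002", b"weights-v2"),
    ("ckpt-003", b"weights-v3"),
]
manifest = {name: hashlib.sha256(blob).hexdigest() for name, blob in backups}

# After the incident, the newest checkpoint turns out to have been altered.
found_on_disk = backups[:2] + [("ckpt-003", b"weights-v3-modified")]
restore_target = latest_intact_checkpoint(found_on_disk, manifest)
```

The altered `ckpt-003` is skipped and `ckpt-002` is chosen as the restore target.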

An AI Cybersecurity Framework must also address the distinct threat of adversarial AI. Attackers increasingly use AI to craft sophisticated, hard-to-detect attacks, or directly target the integrity of existing AI defenses. Adversarial examples, which are subtly modified inputs designed to fool a machine learning model into making an incorrect classification, pose a serious threat to areas like facial recognition, malware detection, and automated driving systems. A comprehensive framework includes strategies for hardening models against these tactics, using techniques such as adversarial training, input sanitization, and ensemble methods.
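To make the idea of an adversarial example concrete, the sketch below applies the fast gradient sign method (FGSM), a well-known attack that the text above does not name specifically, to a tiny logistic-regression classifier. The weights, input, and perturbation budget `eps` are made-up values chosen so the effect is easy to see; adversarial training would generate such inputs and add them, correctly labeled, to the training set.

```python
import math
from typing import List


def predict(w: List[float], b: float, x: List[float]) -> float:
    """Probability of class 1 under a logistic-regression model."""
    z = sum(wi * xi for wi, xi in zip(w, x)) + b
    return 1.0 / (1.0 + math.exp(-z))


def fgsm(w: List[float], b: float, x: List[float], y: int, eps: float) -> List[float]:
    """Perturb x by at most eps per feature in the direction that increases loss.

    For logistic loss the gradient with respect to the input is (p - y) * w,
    so only its sign is needed for each feature.
    """
    p = predict(w, b, x)
    grad = [(p - y) * wi for wi in w]
    sign = lambda g: (g > 0) - (g < 0)
    return [xi + eps * sign(g) for xi, g in zip(x, grad)]


# Hypothetical model and input; the true label is 1.
w, b = [2.0, -1.0, 0.5], 0.0
x = [0.3, 0.1, 0.2]
eps = 0.3

x_adv = fgsm(w, b, x, y=1, eps=eps)
clean_score = predict(w, b, x)        # above 0.5: classified as class 1
adv_score = predict(w, b, x_adv)      # below 0.5: classification flipped
```

Each feature moves by no more than `eps`, yet the predicted class changes, which is the property that makes such inputs difficult to catch with simple range checks.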

Model poisoning is another grave concern where an attacker introduces malicious data into the training set, subtly manipulating the model’s future decisions. This requires stringent data validation pipelines and continuous monitoring of data sources to ensure trustworthiness and provenance. The framework must mandate that the supply chain of data, features, and pre-trained components is auditable and secure.
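A data validation pipeline of the kind described above can start with a gate that checks provenance and basic sanity before any record reaches the training set. In this sketch the allow-list of sources, the field names, and the value ranges are all hypothetical; rejected records are quarantined with reasons rather than silently dropped, so they can be reviewed.

```python
from typing import Any, Dict, List, Tuple

# Hypothetical allow-list of data sources with established provenance.
ALLOWED_SOURCES = {"internal-crm", "partner-feed-a"}

Record = Dict[str, Any]


def validate_record(record: Record) -> List[str]:
    """Return a list of problems found in a record (empty if it passes)."""
    problems = []
    if record.get("source") not in ALLOWED_SOURCES:
        problems.append("untrusted source")
    amount = record.get("amount")
    is_number = isinstance(amount, (int, float)) and not isinstance(amount, bool)
    if not is_number or not (0 <= amount <= 10_000):
        problems.append("amount out of range")
    if record.get("label") not in (0, 1):
        problems.append("invalid label")
    return problems


def partition(records: List[Record]) -> Tuple[List[Record], List[Tuple[Record, List[str]]]]:
    """Split records into accepted ones and quarantined ones with reasons."""
    accepted, quarantined = [], []
    for record in records:
        problems = validate_record(record)
        if problems:
            quarantined.append((record, problems))
        else:
            accepted.append(record)
    return accepted, quarantined


incoming = [
    {"source": "internal-crm", "amount": 120.0, "label": 0},
    {"source": "scraped-forum", "amount": 50.0, "label": 1},
    {"source": "partner-feed-a", "amount": -5, "label": 1},
    {"source": "partner-feed-a", "amount": 300, "label": "fraud"},
]
accepted, quarantined = partition(incoming)
```

Checks like these catch only crude poisoning attempts. Carefully crafted poisoned records can pass schema and range validation, which is why the text also calls for continuous monitoring of data sources.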

Operationalizing such a framework presents significant technical and organizational challenges. It requires substantial investment in specialized talent: individuals skilled in both data science and cybersecurity. Furthermore, the “black box” nature of deep learning models makes vulnerability assessment difficult; understanding why a model failed or made a specific decision is essential for forensic analysis but is often hard to establish and computationally expensive. Explainable AI (XAI) tools are therefore crucial components of the framework, helping make opaque models auditable and defensible.

Taken together, these elements make an AI Cybersecurity Framework indispensable for any modern organization that relies on machine intelligence. It moves beyond traditional perimeter defenses to address the data, models, and algorithms that form the core of AI systems. By institutionalizing governance, protecting model integrity, enhancing detection through AI-driven insights, automating response, and building recovery resilience, the framework helps organizations harness AI securely and responsibly while maintaining public trust and regulatory compliance in an evolving threat landscape.

Future iterations of these frameworks will place greater emphasis on continuous assurance, using automated auditing tools to monitor model health in real time for drift or anomalous behavior. They must also adapt quickly to new computational paradigms such as quantum computing, which threatens widely used public-key cryptography, and to the widespread adoption of edge AI, where security challenges are amplified by distributed deployment environments. Mastering the AI Cybersecurity Framework is not just about adopting new tools; it is about adopting a security philosophy that recognizes the dual-use nature of AI in both defense and attack, so that the benefits of machine learning are realized without compromising core security tenets.
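Automated drift monitoring can be as simple as comparing the distribution of a model's recent outputs with a reference distribution captured at deployment. The sketch below computes the Population Stability Index (PSI), one commonly used drift statistic; the bin edges, sample scores, and the 0.25 alert threshold (a widely quoted rule of thumb, not a standard) are assumptions for illustration.

```python
import math
from typing import List, Sequence


def histogram(values: Sequence[float], edges: Sequence[float]) -> List[int]:
    """Count values per bin; bins are [lo, hi) except the last, which is [lo, hi]."""
    counts = [0] * (len(edges) - 1)
    for v in values:
        for i in range(len(counts)):
            is_last = i == len(counts) - 1
            if edges[i] <= v < edges[i + 1] or (is_last and v == edges[-1]):
                counts[i] += 1
                break
    return counts


def psi(expected: Sequence[float], actual: Sequence[float],
        edges: Sequence[float], floor: float = 1e-6) -> float:
    """Population Stability Index between a reference and a live sample."""
    e_counts, a_counts = histogram(expected, edges), histogram(actual, edges)
    e_total, a_total = sum(e_counts), sum(a_counts)
    if e_total == 0 or a_total == 0:
        raise ValueError("both samples need at least one value inside the bins")
    total = 0.0
    for e, a in zip(e_counts, a_counts):
        e_frac = max(e / e_total, floor)   # floor avoids log(0) for empty bins
        a_frac = max(a / a_total, floor)
        total += (a_frac - e_frac) * math.log(a_frac / e_frac)
    return total


# Hypothetical model scores at deployment time and in the most recent window.
edges = [0.0, 0.25, 0.5, 0.75, 1.0]
reference_scores = [0.1, 0.2, 0.2, 0.3, 0.4, 0.5, 0.6, 0.7]
live_scores = [0.5, 0.6, 0.7, 0.8, 0.8, 0.9, 0.9, 0.95]

drift = psi(reference_scores, live_scores, edges)
needs_review = drift > 0.25
```

A PSI of zero means the two samples fall into the bins in identical proportions. In this example the live scores have shifted sharply toward the top bins, so the check raises a review flag.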

The scope of the framework extends to managing the complexity introduced by hybrid cloud environments, where AI training may occur on-premises while deployment happens across multiple public cloud providers. This necessitates standardized security policies and unified identity management across fragmented infrastructures. The framework acts as the connective tissue, providing a single, comprehensive security blueprint.

Furthermore, attention must be paid to the ethical dimension of AI security. A framework must prevent AI systems from being manipulated to propagate misinformation, exhibit discriminatory bias, or compromise human rights. Security and ethics become inseparable under this new paradigm, demanding checks and balances within the development pipeline to ensure societal and organizational values are maintained, even under duress from malicious actors.

Finally, continuous education and awareness training are critical components. Personnel, from executive leadership to technical operators and data scientists, must understand their roles in maintaining the security posture defined by the AI Cybersecurity Framework, recognizing that human error remains a primary point of vulnerability. The framework, therefore, is as much a cultural mandate for security consciousness in the age of artificial intelligence as it is a technical document.